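Making Your First PWN Challenge

October 27 is the SECCON CTF online qualifier!

Why not send a handmade PWN challenge to your coworkers and friends to show your everyday appreciation?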

Ingredients

- ★Docker: 1

- xinetd: 1

- ★git: as needed

- ★gcc: 1

- ★Python2: 1

- ★pwntools: 1

- ★vim: 3

- ★Your favorite text editor: a pinch

- Your favorite Linux distribution (Arch Linux is used here): 1

Prep

Update Linux and let it settle in with the package manager, then add the ★ ingredients and mix:

Check your distribution and install everything with the right package manager for it.

    # Arch
    sudo pacman -Syu && sudo pacman -S docker git gcc-multilib python2 vim python2-pip && sudo pip2 install pwntools

    # Ubuntu (gcc-multilib is needed for the 32-bit build with -m32)
    sudo apt update && sudo apt upgrade && sudo apt install docker-ce git gcc gcc-multilib python2 vim python2-pip && sudo pip2 install pwntools

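Making the challenge

1. Prepare the dough

Decide what kind of dough to use: buffer overflow, format string attack, and so on.

2. Decide on the method

Decide how it should be exploited (shellcode, ROP, and so on) and choose the security mechanisms to match.

3. Make it

Make it.

[One-point tips for a tastier result!]

1. Call fflush(stdout); before asking the user for input! Any buffered puts() or printf() output gets written out, and the people solving your challenge will like you more for it!

2. Put the vulnerable code in a function other than main()! In a 32-bit build, GCC's main() restores the stack pointer from a value saved on the stack (lea esp, [ecx-4]) before ret, so an overflow breaks the stack pointer before it ever reaches the instruction pointer, and eip won't even look your way at main()'s ret!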

    #include <stdio.h>

    void print_flag(void) {
        // omitted
    }

    int in_name(void) {
        char name[16];
        printf("Type Your Name : ");
        fflush(stdout);
        scanf("%32s", name);  // reads up to 32 bytes into name[16]: the intended overflow
        return 0;
    }

    int main(void) {
        in_name();
        return 0;
    }

4. Compile

Compile it.

[Caution!!] The compiler you normally use without a second thought needs care when making PWN: choose the options carefully and with feeling!

    mkdir bin
    gcc pwn.c -o bin/pwn -m32 -O0 -fno-stack-protector -no-pie -fno-pie
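As a quick reference, here is a small sketch that spells out what each option in the command above does and rebuilds the same command line. The descriptions are the standard gcc meanings; the helper name `build_command` is only for illustration.

```python
# Meaning of each gcc option used in the compile step (standard gcc behaviour).
GCC_FLAGS = {
    "-m32": "build a 32-bit x86 binary",
    "-O0": "disable optimisation so the stack layout stays close to the source",
    "-fno-stack-protector": "do not insert stack canaries",
    "-no-pie": "link a non-position-independent executable (fixed code addresses)",
    "-fno-pie": "compile code for a non-PIE executable",
}


def build_command(source="pwn.c", output="bin/pwn"):
    """Return the gcc command line as an argument list (hypothetical helper)."""
    return ["gcc", source, "-o", output] + list(GCC_FLAGS)


if __name__ == "__main__":
    for flag, meaning in GCC_FLAGS.items():
        print("{:<22} {}".format(flag, meaning))
    print(" ".join(build_command()))
```

Turning any of these back on (for example dropping -fno-stack-protector) changes which attack the challenge allows, so the flag list is effectively part of the challenge design.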

5. Done

The challenge is complete!

Taste-test it with pwntools to check that it can actually be attacked!
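A minimal sketch of the payload for the sample program above, written with only the Python 3 standard library so it runs without pwntools. The offset and the address of print_flag are hypothetical placeholders: both depend on your compiler and binary and have to be measured (for example with gdb and `nm bin/pwn`).

```python
import struct

WHITESPACE = b" \t\n\r\x0b\x0c"


def build_payload(offset, ret_addr, max_len=32):
    """Padding up to the saved return address, then a 32-bit little-endian address."""
    payload = b"A" * offset + struct.pack("<I", ret_addr)
    if len(payload) > max_len:
        # scanf("%32s") stores at most 32 input bytes
        raise ValueError("payload longer than the scanf field width")
    if any(byte in WHITESPACE for byte in payload):
        # scanf("%s") stops reading at the first whitespace byte
        raise ValueError("payload contains a whitespace byte")
    return payload


OFFSET = 28              # hypothetical: distance from name[] to the return address
PRINT_FLAG = 0x08048486  # hypothetical: address of print_flag in your build

if __name__ == "__main__":
    print(repr(build_payload(OFFSET, PRINT_FLAG)))
```

With pwntools the same bytes would normally be built with its packing helpers and sent through a process or remote tube; the two checks here just encode the limits that scanf("%32s") imposes on the input.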

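Building the runtime environment

The challenge itself is done, but let's wrap it up so that it actually runs as a service!

This is starting to feel like a chore, but hang in there!

[Why build an environment?]

If someone solving a "get root" pwn challenge got your real root, that would be a disaster, right?

So we use Docker to build an environment where it's fine if root gets taken. (Period.)

If you only want to try it yourself,

socat TCP-LISTEN:8080,reuseaddr,fork EXEC:./pwn

is enough.

1. Start the service

On Arch, do this.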

sudo systemctl start docker.service

2. Prepare the config files

Download the config files:

git clone https://github.com/Eadom/ctf_xinetd

In ctf.xinetd, find the line server_args = --userspec=1000:1000 /home/ctf ./helloworld and replace helloworld with the name of your own executable.
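If you would rather script that edit, the following sketch does the same replacement on the text of the file. The function name `set_binary` is hypothetical, and only the server_args line quoted above is assumed about the file's contents.

```python
import re


def set_binary(config_text, binary_name):
    """Replace the ./helloworld target on the server_args line."""
    pattern = re.compile(r"^(\s*server_args\s*=.*\./)helloworld\b", re.MULTILINE)
    return pattern.sub(lambda m: m.group(1) + binary_name, config_text)


if __name__ == "__main__":
    line = "    server_args = --userspec=1000:1000 /home/ctf ./helloworld\n"
    print(set_binary(line, "pwn"), end="")
```

Reading ctf.xinetd, passing its contents through this function, and writing the result back is equivalent to the manual edit.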

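3. Build

Build it. Run this in the cloned ctf_xinetd directory; the trailing . is the build context.

sudo docker build -t "pwn" .

4. Run

sudo docker run --rm -d -p "9999:9999" -h "pwn" --name="pwn" pwn

5. Check the connection

Yay, it's done!

Check the connection.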

nc localhost 9999
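The same check can be scripted. This is a sketch using only the Python 3 standard library; it assumes the service listens on localhost:9999 as in the docker run command above and greets with the prompt from the sample program. The helper names are hypothetical.

```python
import socket


def recv_until(sock, marker, limit=4096):
    """Read from sock until marker is seen, the peer closes, or limit is reached."""
    data = b""
    while marker not in data and len(data) < limit:
        chunk = sock.recv(1)
        if not chunk:
            break
        data += chunk
    return data


def check_service(host="localhost", port=9999, prompt=b"Type Your Name : "):
    """Return True if the service greets us with the expected prompt."""
    with socket.create_connection((host, port), timeout=5) as sock:
        return recv_until(sock, prompt).endswith(prompt)


if __name__ == "__main__":
    print("service is up" if check_service() else "unexpected greeting")
```

If the prompt never arrives, the usual suspect is output buffering in the challenge binary, which is what the fflush(stdout) tip is for.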

Now run the pwntools script you wrote earlier, this time over the network.

Oh, YEAH !

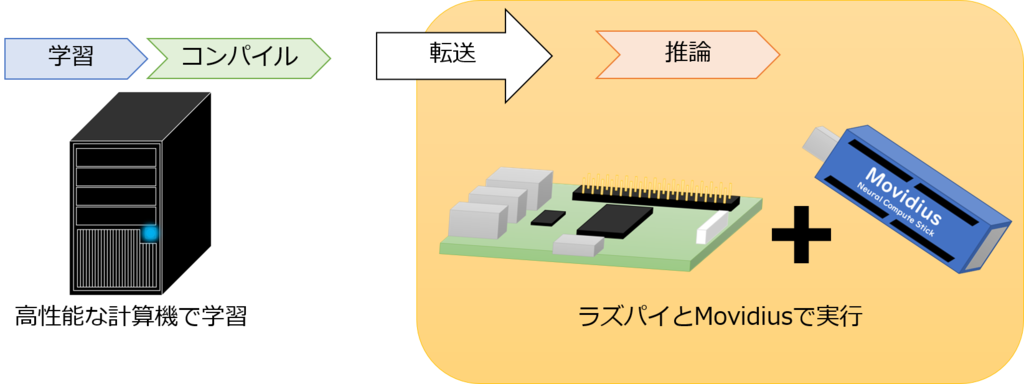

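Distilling InceptionV3 and running it on Movidius

This is too rough to be fit for publishing.

I plan to upload it to GitHub once I have cleaned it up.

Distilling into a makeshift CNN

The makeshift CNN

Input: 299 x 299 x 3

Output: caltech101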

conv2d(299,299,3)

conv2d(,,32)

conv2d(, , 64)

conv2d(, , 128)

dense(625)

dropout

dense(101)
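For orientation, here is a per-example sketch in plain Python of the distillation objective that the training script in the source code section builds: a hard-label cross entropy weighted by 0.2 plus a soft cross entropy against the teacher's softmax at temperature 4. It is a simplification (no batching, no regularization terms), and the function names are mine, not the script's.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax of logits / temperature, computed stably."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(target, predicted, eps=1e-10):
    """-sum(target * log(predicted)), with predicted clipped to [eps, 1]."""
    return -sum(t * math.log(min(max(p, eps), 1.0))
                for t, p in zip(target, predicted))


def distillation_loss(student_logits, teacher_logits, onehot,
                      temperature=4.0, hard_weight=0.2):
    """hard_weight * CE(labels, student) + CE(teacher soft targets, student)."""
    hard = cross_entropy(onehot, softmax(student_logits))
    soft = cross_entropy(softmax(teacher_logits, temperature),
                         softmax(student_logits, temperature))
    return hard_weight * hard + soft


if __name__ == "__main__":
    print(distillation_loss([2.0, 0.5, -1.0], [3.0, 1.0, -2.0], [1, 0, 0]))
```

A higher temperature flattens the teacher's distribution, so the student also learns from the relative scores of the wrong classes rather than only from the top label.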

Results

Teacher

----------------------------------------------

EPOCH 3/3

100%|██████████| 72/72 [00:29<00:00, 2.40it/s]

100%|██████████| 54/54 [00:06<00:00, 7.94it/s]

loss: 0.8792 val accuracy: 0.8924

Student

----------------------------------------------

EPOCH 30/30

100%|██████████| 72/72 [00:17<00:00, 4.09it/s]

100%|██████████| 54/54 [00:03<00:00, 16.21it/s]

loss: 7.9115 val accuracy: 0.4034

Student without distillation

----------------------------------------------

EPOCH 30/30

100%|██████████| 72/72 [00:10<00:00, 6.81it/s]

100%|██████████| 54/54 [00:03<00:00, 15.68it/s]

loss: 16.6656 val accuracy: 0.4259

Distillation lost: validation accuracy was 0.4034 with distillation versus 0.4259 without.

Running on Movidius

mvNCProfile can measure just the inference time.

For a 299 x 299 x 3 image, it reports 130.27 ms per image.

Measured:

Time : 58.298219442367554 (435 images)

That works out to 134 ms per image.

So loading an image costs only about 4 ms.
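The arithmetic behind those two numbers, as a short sketch using only the figures quoted above:

```python
total_seconds = 58.298219442367554  # measured wall time for the whole run
n_images = 435
profiled_ms = 130.27                # per-image inference time from mvNCProfile

per_image_ms = total_seconds / n_images * 1000.0
overhead_ms = per_image_ms - profiled_ms

print("per image: {:.2f} ms".format(per_image_ms))
print("overhead : {:.2f} ms".format(overhead_ms))
```

The overhead is everything the host-side loop adds on top of the profiled inference time.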

Incidentally, the parameter count is 83M.
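That figure can be roughly reproduced from the student network in the source code section (four 3x3 convolutions with 32/64/128/256 channels, each followed by a 2x2 max-pool, then 1000 and 101 fully connected units). The sketch assumes slim's defaults (SAME padding for conv2d, VALID padding for max_pool2d) and 101 classes as in the conversion script.

```python
def count_params(input_size=299, in_channels=3,
                 conv_channels=(32, 64, 128, 256),
                 hidden=1000, n_classes=101):
    """Count weights and biases of the conv/pool/fc student network."""
    total = 0
    size, channels = input_size, in_channels
    for out_channels in conv_channels:
        # 3x3 convolution: kernel weights plus one bias per output channel
        total += 3 * 3 * channels * out_channels + out_channels
        # SAME conv keeps the size; 2x2 stride-2 VALID pooling halves it (floor)
        size, channels = size // 2, out_channels
    flat = size * size * channels
    total += flat * hidden + hidden          # fc1
    total += hidden * n_classes + n_classes  # fc2
    return total


if __name__ == "__main__":
    print(count_params())
```

Almost all of the parameters sit in the first fully connected layer (18 x 18 x 256 inputs times 1000 units), which is why the small CNN is far from small in memory.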

Summary

I fine-tuned InceptionV3, distilled it into another network, and got the result running on Movidius.

Distilling InceptionV3 into MobilenetV1 (224x224, α=1)

Install nets from tensorflow/models (from its research/slim directory):

python setup.py install

After that it can be imported:

import nets.mobilenet_v1

Then, when you call

stu2_logits, stu2_end_points = nets.mobilenet_v1.mobilenet_v1()

the returned stu2_end_points holds the details of MobileNet.

Looking inside it tells you a lot.

['Conv2d_0', 'Conv2d_1_depthwise', 'Conv2d_1_pointwise', 'Conv2d_2_depthwise', 'Conv2d_2_pointwise', 'Conv2d_3_depthwise', 'Conv2d_3_pointwise', 'Conv2d_4_depthwise', 'Conv2d_4_pointwise', 'Conv2d_5_depthwise', 'Conv2d_5_pointwise', 'Conv2d_6_depthwise', 'Conv2d_6_pointwise', 'Conv2d_7_depthwise', 'Conv2d_7_pointwise', 'Conv2d_8_depthwise', 'Conv2d_8_pointwise', 'Conv2d_9_depthwise', 'Conv2d_9_pointwise', 'Conv2d_10_depthwise', 'Conv2d_10_pointwise', 'Conv2d_11_depthwise', 'Conv2d_11_pointwise', 'Conv2d_12_depthwise', 'Conv2d_12_pointwise', 'Conv2d_13_depthwise', 'Conv2d_13_pointwise', 'AvgPool_1a', 'Logits', 'Predictions']

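You can specify the number of output units when building the model, but then those layers no longer match the pretrained checkpoint, so the weights of the changed parts must not be loaded.

For example, in the list above, Logits and Predictions look suspicious.

    print("shape of logits: ", stu2_end_points['Logits'].shape)
    print("shape of prediction: ", stu2_end_points['Predictions'].shape)

Check the shapes of the suspicious ones, and if they have the number of outputs you specified, do not load weights into them.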

    mbnet_pretrained_include = ["MobilenetV1"]
    mbnet_pretrained_exclude = ["MobilenetV1/Predictions", "MobilenetV1/Logits"]
    mbnet_pretrained_vars = tf.contrib.framework.get_variables_to_restore(
        include=mbnet_pretrained_include,
        exclude=mbnet_pretrained_exclude)
    mbnet_pretrained_saver = tf.train.Saver(
        mbnet_pretrained_vars, name="mobilenet_pretrained_saver")
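To make the include/exclude idea concrete, here is a plain-Python approximation of the filtering on variable names. It is not the TensorFlow implementation, only a sketch of scope-prefix matching, and the variable names in the example are illustrative.

```python
def variables_to_restore(names, include, exclude):
    """Keep names under an include scope that are not under any exclude scope."""
    def in_scope(name, scopes):
        return any(name == s or name.startswith(s.rstrip("/") + "/")
                   for s in scopes)

    return [n for n in names
            if in_scope(n, include) and not in_scope(n, exclude)]


if __name__ == "__main__":
    names = [
        "MobilenetV1/Conv2d_0/weights",
        "MobilenetV1/Logits/Conv2d_1c_1x1/weights",
        "MobilenetV1/Logits/Conv2d_1c_1x1/biases",
        "InceptionV3/Conv2d_1a_3x3/weights",
    ]
    print(variables_to_restore(
        names,
        include=["MobilenetV1"],
        exclude=["MobilenetV1/Predictions", "MobilenetV1/Logits"]))
```

Only the backbone weights survive the filter, so the Saver built from that list never tries to load the resized output layer from the checkpoint.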

Trying it

Teacher

----------------------------------------------

EPOCH 30/30

100%|██████████| 72/72 [00:16<00:00, 4.35it/s]

100%|██████████| 54/54 [00:03<00:00, 13.75it/s]

loss: 0.0003 val accuracy: 0.8582

Student

----------------------------------------------

EPOCH 72/300

100%|██████████| 72/72 [00:17<00:00, 4.01it/s]

100%|██████████| 54/54 [00:02<00:00, 23.67it/s]

loss: 6.1732 val accuracy: 0.4126

Converting for Movidius

Running mvNCCompile gives

FailedPreconditionError (see above for traceback): Attempting to use uninitialized value MobilenetV1/Conv2d_0/BatchNorm/moving_mean

The Saver used in the conversion script was saving only the trainable variables, so I switched it to tf.global_variables(), which gave

NotFoundError: Key MobilenetV1/Conv2d_0/BatchNorm/moving_mean not found in checkpoint

which means this variable was already missing when the checkpoint was saved in the first place.

So I changed the original training script to save tf.global_variables() as well.
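The root cause is that batch-norm moving averages are not trainable, so a Saver built from the trainable variables silently leaves them out. A tiny plain-Python sketch of the check, with illustrative variable names and a hypothetical helper:

```python
def missing_from_checkpoint(needed, saved):
    """Names the graph needs at inference time that the checkpoint lacks."""
    saved = set(saved)
    return [name for name in needed if name not in saved]


if __name__ == "__main__":
    prefix = "MobilenetV1/Conv2d_0/"
    trainable = [prefix + "weights", prefix + "BatchNorm/beta"]
    all_global = trainable + [prefix + "BatchNorm/moving_mean",
                              prefix + "BatchNorm/moving_variance"]
    # Saving only `trainable` leaves the moving statistics out of the checkpoint.
    print(missing_from_checkpoint(all_global, trainable))
```

Anything this check reports has to be included when saving, which is what switching the Saver to tf.global_variables() achieves.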

[Error 5] Toolkit Error: Stage Details Not Supported: Top Not Found preprocess/rescaled_inputs

[Error 5] Toolkit Error: Stage Details Not Supported: Top Not Found mbnet_struct/truediv

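Now it fails with different errors.

Maybe div is not supported?

For the former, I decided to divide by 255 before feeding the input.

The latter was not being used anyway.

I removed it and re-ran.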

    tokunn@nanase 9:00:09 [~/Documents/distil_incep2mbnet0921] $ python3 movidius.py /home/tokunn/caltech101/butterfly 2>/dev/null
    Image path : /home/tokunn/caltech101/butterfly/*.jpg or *.png
    ['image_0032.jpg', 'image_0073.jpg', 'image_0017.jpg', 'image_0089.jpg', 'image_0085.jpg', 'image_0078.jpg', 'image_0056.jpg', 'image_0069.jpg', 'image_0074.jpg', 'image_0025.jpg']
    imgshape (91, 224, 224)
    Start predicting ...
    butterfly Faces butterfly chandelier butterfly Faces chandelier butterfly butterfly butterfly butterfly revolver butterfly butterfly revolver butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly chandelier butterfly butterfly butterfly butterfly butterfly butterfly butterfly sunflower revolver butterfly chandelier butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly chandelier butterfly butterfly butterfly Faces butterfly Faces butterfly butterfly butterfly Faces butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly chandelier cellphone butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly butterfly
    Time : 3.817107915878296 (91 images)

Wonderful!

Source code

Makeshift CNN edition

Teacher and student

    #!/usr/bin/env python
    # coding: utf-8

    # In[1]:

    import os,time,glob
    os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    os.environ["CUDA_VISIBLE_DEVICES"] = "0"
    import tensorflow as tf
    import numpy as np
    import tensorflow.contrib.slim as slim
    import tensorflow.contrib.slim.nets
    #from __future__ import print_function, division
    import loadimg_caltech as loadimg
    from tqdm import tqdm
    import matplotlib.pyplot as plt

    start = time.time()

    # In[2]:

    np_aryname = './models/data{0}.npy'
    try:
        # LOAD
        X_train = np.load(np_aryname.format('X_train'))
        Y_train = np.load(np_aryname.format('Y_train'))
        X_test = np.load(np_aryname.format('X_test'))
        Y_test = np.load(np_aryname.format('Y_test'))
        number_of_classes = np.asscalar(np.load(np_aryname.format('number_of_classes')))
    except FileNotFoundError:
        print("### Load from Images ###")
        X_train, Y_train, X_test, Y_test, number_of_classes = loadimg.loadimg(
            '/home/tokunn/caltech101')
        np.save(np_aryname.format('X_train'), X_train)
        np.save(np_aryname.format('Y_train'), Y_train)
        np.save(np_aryname.format('X_test'), X_test)
        np.save(np_aryname.format('Y_test'), Y_test)
        np.save(np_aryname.format('number_of_classes'), number_of_classes)

    print("X_train", X_train.shape)
    print("Y_train", Y_train.shape)
    print("X_test", X_test.shape)
    print("Y_test", Y_test.shape)
    print("Number of Classes", number_of_classes)

    # In[3]:

    SNAPSHOT_FILE = "./models/snapshot.ckpt"
    STU_SNAPSHOT_FILE = "./models/student_snapshot.ckpt"
    PRETRAINED_SNAPSHOT_FILE = "./models/inception_v3.ckpt"

    # somewhere to store the tensorboard files - to visualise the graph
    TENSORBOARD_DIR = "logs"
    #[os.remove(i) for i in glob.glob(os.path.join(TENSORBOARD_DIR, '*.nanase'))]

    # IMAGE SETTINGS
    IMG_WIDTH, IMG_HEIGHT = [299,299]  # Dimensions required by inception V3
    N_CHANNELS = 3                     # Number of channels required by inception V3
    N_CLASSES = number_of_classes      # Change N_CLASSES to suit your needs

    temperature = 4

    # In[4]:

    def NetworkStudent(input,keep_prob_conv,keep_prob_hidden,scope='Student', reuse = False):
        with tf.variable_scope(scope, reuse = reuse) as sc:
            with slim.arg_scope([slim.conv2d],
                                kernel_size = [3,3],
                                stride = [1,1],
                                biases_initializer=tf.constant_initializer(0.0),
                                activation_fn=tf.nn.relu):
                net = slim.conv2d(input, 32, scope='conv1')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool1')
                net = tf.nn.dropout(net, keep_prob_conv)
                net = slim.conv2d(net, 64,scope='conv2')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool2')
                net = tf.nn.dropout(net, keep_prob_conv)
                net = slim.conv2d(net, 128,scope='conv3')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool3')
                net = tf.nn.dropout(net, keep_prob_conv)
                net = slim.conv2d(net, 256,scope='conv4')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool4')
                net = tf.nn.dropout(net, keep_prob_conv)
                net = slim.flatten(net)
            with slim.arg_scope([slim.fully_connected],
                                biases_initializer=tf.constant_initializer(0.0),
                                activation_fn=tf.nn.relu) :
                net = slim.fully_connected(net,1000,scope='fc1')  # 625
                net = tf.nn.dropout(net, keep_prob_hidden)
                net = slim.fully_connected(net,N_CLASSES,activation_fn=None,scope='fc2')
                #net = tf.nn.softmax(net/temperature)
        return net

    # In[5]:

    def loss(prediction,output):#,temperature = 1):
        cross_entropy = tf.reduce_mean(-tf.reduce_sum(
            tf.cast(output, tf.float32) * tf.log(tf.clip_by_value(prediction,1e-10,1.0)),
            reduction_indices=[1]))
        #correct_prediction = tf.equal(tf.argmax(prediction,1), tf.argmax(output,1))
        #accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        return cross_entropy#,accuracy

    # In[6]:

    graph = tf.Graph()
    with graph.as_default():
        # INPUTS
        with tf.name_scope("inputs") as scope:
            input_dims = (None, IMG_HEIGHT, IMG_WIDTH, N_CHANNELS)
            tf_X = tf.placeholder(tf.float32, shape=input_dims, name="X")
            tf_Y = tf.placeholder(tf.int32, shape=[None, N_CLASSES], name="Y")
            tf_alpha = tf.placeholder_with_default(0.001, shape=None, name="alpha")
            tf_is_training = tf.placeholder_with_default(False, shape=None, name="is_training")
            stu_keep_prob_conv = tf.placeholder(tf.float32)
            stu_keep_prob_hidden = tf.placeholder(tf.float32)

        # PREPROCESSING STEPS
        with tf.name_scope("preprocess") as scope:
            scaled_inputs = tf.div(tf_X, 255., name="rescaled_inputs")

        # BODY
        arg_scope = tf.contrib.slim.nets.inception.inception_v3_arg_scope()
        with tf.contrib.framework.arg_scope(arg_scope):
            tf_logits, end_points = tf.contrib.slim.nets.inception.inception_v3(
                scaled_inputs,
                num_classes=N_CLASSES,
                is_training=tf_is_training,
                dropout_keep_prob=0.8)
        with tf.name_scope("softmax") as scope:
            tch_y = tf.nn.softmax(tf_logits/temperature, name="teacher_softmax")
            tch_y_actual = tf.nn.softmax(tf_logits, name="teacher_softmax_actual")

        # Student
        stu_logits = NetworkStudent(tf_X, stu_keep_prob_conv,
                                    stu_keep_prob_hidden, scope='student')
        with tf.name_scope("stu_struct"):
            # softmax
            stu_y = tf.nn.softmax(stu_logits/temperature, name="softmax")
            stu_y_actual = tf.nn.softmax(stu_logits, name="actual_softmax")

        # Separate vars
        model_vars = tf.trainable_variables()
        var_teacher = [var for var in model_vars if 'InceptionV3' in var.name]
        var_student = [var for var in model_vars if 'student' in var.name]

        # PREDICTIONS
        tf_preds = tf.to_int32(tf.argmax(tf_logits, axis=-1), name="preds")

        # LOSS - Sums all losses (even Regularization losses)
        with tf.variable_scope('loss') as scope:
            #unrolled_labels = tf.reshape(tf_Y, (-1,))
            #tf.losses.softmax_cross_entropy(onehot_labels=unrolled_labels,
            tf.losses.softmax_cross_entropy(onehot_labels=tf_Y, logits=tf_logits)
            tf_loss = tf.losses.get_total_loss()
            #tf_loss = loss(tch_y_actual, tf_Y)

        # OPTIMIZATION - Also updates batchnorm operations automatically
        with tf.variable_scope('opt') as scope:
            #tf_optimizer = tf.train.AdamOptimizer(tf_alpha, name="optimizer")
            #update_ops = tf.get_collection(tf.GraphKeys.UPDATE_OPS) # for batchnorm
            #with tf.control_dependencies(update_ops):
            #    tf_train_op = tf_optimizer.minimize(tf_loss, name="train_op")
            grad_teacher = tf.gradients(tf_loss, var_teacher)
            tf_trainer = tf.train.AdamOptimizer(tf_alpha, name="optimizer")
            tf_train_step = tf_trainer.apply_gradients(zip(grad_teacher, var_teacher))

        # Evaluation
        with tf.variable_scope('eval') as scope:
            y = tf.nn.softmax(tf_logits, name='softmax')
            accuracy = tf.reduce_mean(
                tf.cast(tf.equal(tf.argmax(y, 1), tf.argmax(tf_Y, 1)), tf.float32)
            )

        # PRETRAINED SAVER SETTINGS
        # Lists of scopes of weights to include/exclude from pretrained snapshot
        pretrained_include = ["InceptionV3"]
        pretrained_exclude = ["InceptionV3/AuxLogits", "InceptionV3/Logits"]

        # PRETRAINED SAVER - For loading pretrained weights on the first run
        pretrained_vars = tf.contrib.framework.get_variables_to_restore(
            include=pretrained_include,
            exclude=pretrained_exclude)
        tf_pretrained_saver = tf.train.Saver(pretrained_vars, name="pretrained_saver")

        # Student
        with tf.name_scope("stu_train"):
            # loss
            tf.losses.softmax_cross_entropy(onehot_labels=tf_Y, logits=stu_logits)
            stu_loss1 = tf.losses.get_total_loss()
            #stu_loss1 = loss(stu_y_actual, tf_Y)
            stu_loss2 = tf.reduce_mean(- tf.reduce_sum(tch_y * tf.log(
                tf.clip_by_value(stu_y, 1e-10,1.0)), reduction_indices=1))
            stu_loss = 0.2 * stu_loss1 + stu_loss2
            #stu_loss = stu_loss1
            # optimization
            grad_student = tf.gradients(stu_loss,var_student)
            stu_trainer = tf.train.RMSPropOptimizer(learning_rate = 0.0002)
            #stu_trainer = tf.train.AdamOptimizer(tf_alpha, name="optimizer")
            #stu_trainer = tf.train.AdadeltaOptimizer()
            train_step_student = stu_trainer.apply_gradients(zip(grad_student, var_student))
            #stu_optimizer = tf.train.AdamOptimizer(tf_alpha, name="stu_optimizer")
            #stu_train_op = tf_optimizer.minimize(stu_loss, name="stu_train_op")
            # evaluation
            stu_accuracy = tf.reduce_mean(
                tf.cast(tf.equal(tf.argmax(stu_y_actual, 1), tf.argmax(tf_Y, 1)), tf.float32)
            )

        # MAIN SAVER - For saving/restoring your complete model
        tf_saver = tf.train.Saver(var_teacher, name="saver")
        # STUDENT SAVER
        stu_saver = tf.train.Saver(var_student, name="stu_saver")

        # TENSORBOARD - To visualize the architecture
        with tf.variable_scope('tensorboard') as scope:
            tf_summary_writer = tf.summary.FileWriter(TENSORBOARD_DIR, graph=graph)
            tf_dummy_summary = tf.summary.scalar(name="dummy", tensor=1)

    # In[7]:

    def initialize_vars(session):
        # INITIALIZE VARS
        LOAD_FROM_CHECKPOINT = False
        if LOAD_FROM_CHECKPOINT: #tf.train.checkpoint_exists(SNAPSHOT_FILE):
            print(" Loading from Main Checkpoint")
            session.run(tf.global_variables_initializer())
            tf_saver.restore(session, SNAPSHOT_FILE)
        else:
            print("Initializing from Pretrained Weights")
            session.run(tf.global_variables_initializer())
            tf_pretrained_saver.restore(session, PRETRAINED_SNAPSHOT_FILE)

    # In[ ]:

    with tf.Session(graph=graph) as sess:
        n_epochs = 5
        batch_size = 32 # small batch size so inception v3 can be run on laptops
        steps_per_epoch = len(X_train)//batch_size // 3 # FOR DEBUG
        steps_per_epoch_val = len(X_test)//batch_size

        initialize_vars(session=sess)

        print("##### Teacher Training Section #####")
        for epoch in range(n_epochs):
            print("----------------------------------------------", flush=True)
            print("EPOCH {}/{}".format(epoch+1, n_epochs), flush=True, end=' ')

            ## TRAINING
            for step in tqdm(range(steps_per_epoch)):
                # EXTRACT A BATCH OF TRAINING DATA
                X_batch = X_train[batch_size*step: batch_size*(step+1)]
                Y_batch = Y_train[batch_size*step: batch_size*(step+1)]

                # RUN ONE TRAINING STEP - feeding batch of data
                feed_dict = {tf_X: X_batch,
                             tf_Y: Y_batch,
                             tf_alpha:0.0001,
                             tf_is_training: True}
                #loss, _ = sess.run([tf_loss, tf_train_op], feed_dict=feed_dict)
                tf_train_step.run(feed_dict=feed_dict)

            ## EVALUATE
            val_accuracy = []
            for step in tqdm(range(steps_per_epoch_val)):
                # EXTRACT A BATCH OF TEST DATA
                X_batch = X_test[batch_size*step: batch_size*(step+1)]
                Y_batch = Y_test[batch_size*step: batch_size*(step+1)]

                # Evaluation
                feed_dict = {tf_X: X_batch,
                             tf_Y: Y_batch,
                             tf_alpha:0.0001,
                             tf_is_training: False}
                val_accuracy.append(accuracy.eval(feed_dict=feed_dict))

            # PRINT FEED BACK - once every `print_every` steps
            total_val_accuracy = np.average(np.asarray(val_accuracy))
            pre_logits, pre_loss = sess.run([tch_y, tf_loss], feed_dict = {
                tf_X: [X_test[5]],
                tf_Y: [Y_test[5]],
                tf_is_training: False
            })
            print("\tloss: {:0.4f} val accuracy: {:0.4f}".format(pre_loss, total_val_accuracy))
            plt.plot(pre_logits[0])
            plt.show()

            # SAVE SNAPSHOT - after each epoch
            tf_saver.save(sess, SNAPSHOT_FILE)

        print("### Student Training Section ###")
        n_epochs = 300
        steps_per_epoch = len(X_train)//batch_size // 3 # FOR DEBUG
        steps_per_epoch_val = len(X_test)//batch_size
        for epoch in range(n_epochs):
            print("----------------------------------------------", flush=True)
            print("EPOCH {}/{}".format(epoch+1, n_epochs), flush=True, end=' ')

            ## TRAINING
            for step in tqdm(range(steps_per_epoch)):
                # EXTRACT A BATCH OF TRAINING DATA
                X_batch = X_train[batch_size*step: batch_size*(step+1)]
                Y_batch = Y_train[batch_size*step: batch_size*(step+1)]

                # RUN ONE TRAINING STEP - feeding batch of data
                feed_dict = {tf_X: X_batch,
                             tf_Y: Y_batch,
                             #tf_alpha:0.001,
                             stu_keep_prob_conv: 0.8,
                             stu_keep_prob_hidden: 0.5}
                #loss, _ = sess.run(stu_loss, feed_dict=feed_dict)
                train_step_student.run(feed_dict=feed_dict)

            ## EVALUATE
            val_accuracy = []
            for step in tqdm(range(steps_per_epoch_val)):
                # EXTRACT A BATCH OF TEST DATA
                X_batch = X_test[batch_size*step: batch_size*(step+1)]
                Y_batch = Y_test[batch_size*step: batch_size*(step+1)]

                # Evaluation
                feed_dict = {tf_X: X_batch,
                             tf_Y: Y_batch,
                             #tf_alpha:0.001,
                             stu_keep_prob_conv: 1.0,
                             stu_keep_prob_hidden: 1.0}
                val_accuracy.append(stu_accuracy.eval(feed_dict=feed_dict))

            # PRINT FEED BACK - once every `print_every` steps
            total_val_accuracy = np.average(np.asarray(val_accuracy))
            pre_logits, pre_loss = sess.run([stu_logits, stu_loss], feed_dict = {
                tf_X: X_batch,
                tf_Y: Y_batch,
                #tf_alpha:0.001,
                stu_keep_prob_conv: 1.0,
                stu_keep_prob_hidden: 1.0
            })
            print("\tloss: {:0.4f} val accuracy: {:0.4f}".format(pre_loss, total_val_accuracy))
            plt.plot(pre_logits[0])
            plt.show()

            stu_saver.save(sess, STU_SNAPSHOT_FILE)

    # In[ ]:

    end = time.time()
    print("Time : {0}".format(end-start))

For conversion

    #!/usr/bin/env python
    # coding: utf-8

    # In[1]:

    import os,time,glob
    os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    os.environ["CUDA_VISIBLE_DEVICES"] = "0"
    import tensorflow as tf
    import numpy as np
    import tensorflow.contrib.slim as slim

    # In[2]:

    STU_SNAPSHOT_FILE = "./models/student_snapshot.ckpt"
    STU_FLOZEN_FILE = "./models/student_flozen.ckpt"

    # somewhere to store the tensorboard files - to visualise the graph
    TENSORBOARD_DIR = "logs"
    [os.remove(i) for i in glob.glob(os.path.join(TENSORBOARD_DIR, '*.nanase'))]

    # IMAGE SETTINGS
    IMG_WIDTH, IMG_HEIGHT = [299,299]  # Dimensions required by inception V3
    N_CHANNELS = 3                     # Number of channels required by inception V3
    N_CLASSES = 101                    # Change N_CLASSES to suit your needs

    temperature = 4

    # In[3]:

    # Same student network as in training, with the dropout layers removed
    def NetworkStudent(input,scope='Student', reuse = False):
        with tf.variable_scope(scope, reuse = reuse) as sc:
            with slim.arg_scope([slim.conv2d],
                                kernel_size = [3,3],
                                stride = [1,1],
                                biases_initializer=tf.constant_initializer(0.0),
                                activation_fn=tf.nn.relu):
                net = slim.conv2d(input, 32, scope='conv1')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool1')
                net = slim.conv2d(net, 64,scope='conv2')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool2')
                net = slim.conv2d(net, 128,scope='conv3')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool3')
                net = slim.conv2d(net, 256,scope='conv4')
                net = slim.max_pool2d(net,[2, 2], 2, scope='pool4')
                net = slim.flatten(net)
            with slim.arg_scope([slim.fully_connected],
                                biases_initializer=tf.constant_initializer(0.0),
                                activation_fn=tf.nn.relu) :
                net = slim.fully_connected(net,1000,scope='fc1')  # 625
                net = slim.fully_connected(net,N_CLASSES,activation_fn=None,scope='fc2')
                #net = tf.nn.softmax(net/temperature)
        return net

    # In[4]:

    graph = tf.Graph()
    with graph.as_default():
        # INPUTS
        with tf.name_scope("inputs") as scope:
            input_dims = (None, IMG_HEIGHT, IMG_WIDTH, N_CHANNELS)
            tf_X = tf.placeholder(tf.float32, shape=input_dims, name="X")

        # PREPROCESSING STEPS
        with tf.name_scope("preprocess") as scope:
            scaled_inputs = tf.div(tf_X, 255., name="rescaled_inputs")

        # Student
        stu_logits = NetworkStudent(tf_X, scope='student')
        with tf.name_scope("stu_struct"):
            # softmax
            stu_y = tf.nn.softmax(stu_logits/temperature, name="softmax")
            stu_y_actual = tf.nn.softmax(stu_logits, name="actual_softmax")

        # Separate vars
        model_vars = tf.trainable_variables()
        var_student = [var for var in model_vars if 'student' in var.name]

        # parameter
        total_parameters = 0
        for variable in tf.trainable_variables():
            # shape is an array of tf.Dimension
            shape = variable.get_shape()
            #print(shape)
            #print(len(shape))
            variable_parameters = 1
            for dim in shape:
                #print(dim)
                variable_parameters *= dim.value
            #print(variable_parameters)
            total_parameters += variable_parameters
        print("total params: ",total_parameters)

        # STUDENT SAVER
        stu_saver = tf.train.Saver(var_student, name="stu_saver")

        # TENSORBOARD - To visualize the architecture
        with tf.variable_scope('tensorboard') as scope:
            tf_summary_writer = tf.summary.FileWriter(TENSORBOARD_DIR, graph=graph)
            tf_dummy_summary = tf.summary.scalar(name="dummy", tensor=1)

    # In[5]:

    with tf.Session(graph=graph) as sess:
        sess.run(tf.global_variables_initializer())
        sess.run(tf.local_variables_initializer())
        stu_saver.restore(sess, STU_SNAPSHOT_FILE)
        stu_saver.save(sess, STU_FLOZEN_FILE)

For prediction on Movidius

    import mvnc.mvncapi as mvnc
    import numpy as np
    from PIL import Image
    import cv2
    import time, sys, os
    import glob

    IMAGE_DIR_NAME = '/home/tokunn/caltech101'
    if (len(sys.argv) > 1):
        IMAGE_DIR_NAME = sys.argv[1]
    #IMAGE_DIR_NAME = 'github_deep_mnist/ncappzoo/data/digit_images'


    def predict(input):
        print("Start predicting ...")
        devices = mvnc.EnumerateDevices()
        device = mvnc.Device(devices[0])
        device.OpenDevice()

        # Load graph file data
        with open('./models/graph', 'rb') as f:
            graph_file_buffer = f.read()

        # Initialize a Graph object
        graph = device.AllocateGraph(graph_file_buffer)

        start = time.time()
        for i in range(len(input)):
            # Write the tensor to the input_fifo and queue an inference
            graph.LoadTensor(input[i], None)
            output, userobj = graph.GetResult()
            print(np.argmax(output), end=' ')
        stop = time.time()
        print('')
        print("Time : {0} ({1} images)".format(stop-start, len(input)))

        graph.DeallocateGraph()
        device.CloseDevice()
        return output


    if __name__ == '__main__':
        print("Image path : {0}".format(os.path.join(IMAGE_DIR_NAME, '*.jpg or *.png')))
        jpg_list = glob.glob(os.path.join(IMAGE_DIR_NAME, '*.jpg'))
        jpg_list += glob.glob(os.path.join(IMAGE_DIR_NAME, '*.png'))
        if not len(jpg_list):
            print("No image file")
            sys.exit()
        jpg_list.reverse()
        print([i.split('/')[-1] for i in jpg_list][:10])

        # Load each image as grayscale and resize it to the network input size
        img_list = []
        for n in jpg_list:
            image = cv2.imread(n)
            image = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
            image = cv2.resize(image, (299, 299))
            img_list.append(image)
        img_list = np.asarray(img_list)# * (1.0/255.0)
        #img_list = np.reshape(img_list, [-1, 784])
        print("imgshape ", img_list.shape)

        predict(img_list.astype(np.float16))

InceptionV3 to MobilenetV1 edition

Teacher and student

    #!/usr/bin/env python
    # coding: utf-8

    # In[1]:

    import os,time,glob,sys
    #sys.path.append('/home/tokunn/sources/models/research/slim')
    os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    os.environ["CUDA_VISIBLE_DEVICES"] = "0"
    import tensorflow as tf
    import numpy as np
    import tensorflow.contrib.slim as slim
    import tensorflow.contrib.slim.nets
    import nets.mobilenet_v1
    #from __future__ import print_function, division
    import loadimg_caltech as loadimg
    from tqdm import tqdm
    import matplotlib.pyplot as plt

    start = time.time()

    # In[2]:

    np_aryname = './models/data{0}.npy'
    try:
        # LOAD
        X_train = np.load(np_aryname.format('X_train'))
        Y_train = np.load(np_aryname.format('Y_train'))
        X_test = np.load(np_aryname.format('X_test'))
        Y_test = np.load(np_aryname.format('Y_test'))
        number_of_classes = np.asscalar(np.load(np_aryname.format('number_of_classes')))
    except FileNotFoundError:
        print("### Load from Images ###")
        X_train, Y_train, X_test, Y_test, number_of_classes = loadimg.loadimg(
            '/home/tokunn/caltech101')
        np.save(np_aryname.format('X_train'), X_train)
        np.save(np_aryname.format('Y_train'), Y_train)
        np.save(np_aryname.format('X_test'), X_test)
        np.save(np_aryname.format('Y_test'), Y_test)
        np.save(np_aryname.format('number_of_classes'), number_of_classes)

    print("X_train", X_train.shape)
    print("Y_train", Y_train.shape)
    print("X_test", X_test.shape)
    print("Y_test", Y_test.shape)
    print("Number of Classes", number_of_classes)

    # In[3]:

    SNAPSHOT_FILE = "./models/snapshot.ckpt"
    #STU_SNAPSHOT_FILE = "./models/student_snapshot.ckpt"
    MBNET_SNAPSHOT_FILE = "./models/mbnet_student_snapshot.ckpt"
    PRETRAINED_SNAPSHOT_FILE = "./models/inception_v3.ckpt"
    PRETRAINED_MOBILENET_FILE = "./models/mobilenet/mobilenet_v1_1.0_224.ckpt"

    # somewhere to store the tensorboard files - to visualise the graph
    TENSORBOARD_DIR = "logs"
    [os.remove(i) for i in glob.glob(os.path.join(TENSORBOARD_DIR, '*.nanase'))]

    # IMAGE SETTINGS
    IMG_WIDTH, IMG_HEIGHT = [224,224]  # 224x224 to match the MobilenetV1 checkpoint
    N_CHANNELS = 3                     # Number of channels required by inception V3
    N_CLASSES = number_of_classes      # Change N_CLASSES to suit your needs

    temperature = 20

    # In[4]:

    def NetworkStudent2(input,scope='Student', tf_is_training=False, reuse = False):
        #with tf.variable_scope(scope, reuse = reuse) as sc:
        arg_scope = nets.mobilenet_v1.mobilenet_v1_arg_scope()
        with tf.contrib.framework.arg_scope(arg_scope):
            # use the function argument rather than the global scaled_inputs
            stu2_logits, stu2_end_points = nets.mobilenet_v1.mobilenet_v1(
                input,
                num_classes=N_CLASSES,
                is_training=tf_is_training)#,
                #depth_multiplier=1.0)
        return stu2_logits, stu2_end_points

    # In[5]:

    # def NetworkStudent(input,keep_prob_conv,keep_prob_hidden,scope='Student', reuse = False):
    #     with tf.variable_scope(scope, reuse = reuse) as sc:
    #         with slim.arg_scope([slim.conv2d],
    #                             kernel_size = [3,3],
    #                             stride = [1,1],
    #                             biases_initializer=tf.constant_initializer(0.0),
    #                             activation_fn=tf.nn.relu):
    #             net = slim.conv2d(input, 32, scope='conv1')
    #             net = slim.max_pool2d(net,[2, 2], 2, scope='pool1')
    #             net = tf.nn.dropout(net, keep_prob_conv)
    #             net = slim.conv2d(net, 64,scope='conv2')
    #             net = slim.max_pool2d(net,[2, 2], 2, scope='pool2')
    #             net = tf.nn.dropout(net, keep_prob_conv)
    #             net = slim.conv2d(net, 128,scope='conv3')
    #             net = slim.max_pool2d(net,[2, 2], 2, scope='pool3')
    #             net = tf.nn.dropout(net, keep_prob_conv)
    #             net = slim.conv2d(net, 256,scope='conv4')
    #             net = slim.max_pool2d(net,[2, 2], 2, scope='pool4')
    #             net = tf.nn.dropout(net, keep_prob_conv)
    #             net = slim.flatten(net)
    #         with slim.arg_scope([slim.fully_connected],
    #                             biases_initializer=tf.constant_initializer(0.0),
    #                             activation_fn=tf.nn.relu) :
    #             net = slim.fully_connected(net,1000,scope='fc1')  # 625
    #             net = tf.nn.dropout(net, keep_prob_hidden)
    #             net = slim.fully_connected(net,N_CLASSES,activation_fn=None,scope='fc2')
    #             #net = tf.nn.softmax(net/temperature)
    #     return net

    # In[6]:

    def loss(prediction,output):#,temperature = 1):
        cross_entropy = tf.reduce_mean(-tf.reduce_sum(
            tf.cast(output, tf.float32) * tf.log(tf.clip_by_value(prediction,1e-10,1.0)),
            reduction_indices=[1]))
        #correct_prediction = tf.equal(tf.argmax(prediction,1), tf.argmax(output,1))
        #accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        return cross_entropy#,accuracy

    # In[7]:

    graph = tf.Graph()
    with graph.as_default():
        # INPUTS
        with tf.name_scope("inputs") as scope:
            input_dims = (None, IMG_HEIGHT, IMG_WIDTH, N_CHANNELS)
            tf_X = tf.placeholder(tf.float32, shape=input_dims, name="X")
            tf_Y = tf.placeholder(tf.int32, shape=[None, N_CLASSES], name="Y")
            tf_alpha = tf.placeholder_with_default(0.001, shape=None, name="alpha")
            tf_is_training = tf.placeholder_with_default(False, shape=None, name="is_training")
            stu_keep_prob_conv = tf.placeholder(tf.float32)
            stu_keep_prob_hidden = tf.placeholder(tf.float32)

        # PREPROCESSING STEPS
        with tf.name_scope("preprocess") as scope:
            #scaled_inputs = tf.div(tf_X, 255., name="rescaled_inputs")
            scaled_inputs = tf_X

        # BODY
        arg_scope = tf.contrib.slim.nets.inception.inception_v3_arg_scope()
        with tf.contrib.framework.arg_scope(arg_scope):
            tf_logits, end_points = tf.contrib.slim.nets.inception.inception_v3(
                scaled_inputs,
                num_classes=N_CLASSES,
                is_training=tf_is_training,
                dropout_keep_prob=0.8)
        with tf.name_scope("softmax") as scope:
            tch_y = tf.nn.softmax(tf_logits/temperature, name="teacher_softmax")
            tch_y_actual = tf.nn.softmax(tf_logits, name="teacher_softmax_actual")

        # Student
        # stu_logits = NetworkStudent(scaled_inputs, stu_keep_prob_conv,
        #                             stu_keep_prob_hidden, scope='student')
        # with tf.name_scope("stu_struct"):
        #     # softmax
        #     stu_y = tf.nn.softmax(stu_logits/temperature, name="softmax")
        #     stu_y_actual = tf.nn.softmax(stu_logits, name="actual_softmax")
        mbnet_logits, mbnet_end_point = NetworkStudent2(
            scaled_inputs, tf_is_training=tf_is_training, scope='mbnet')
        with tf.name_scope("mbnet_struct"):
            # softmax
            mbnet_y = tf.nn.softmax(mbnet_logits/temperature, name="softmax")
            mbnet_y_actual = tf.nn.softmax(mbnet_logits, name="actual_softmax")

        # Separate vars
        model_vars = tf.trainable_variables()
        var_teacher = [var for var in model_vars if 'InceptionV3' in var.name]
        #var_student = [var for var in model_vars if 'student' in var.name]
        save_vars = tf.global_variables()
        var_mbnet = [var for var in save_vars if 'MobilenetV1' in var.name]

        # PREDICTIONS
        tf_preds = tf.to_int32(tf.argmax(tf_logits, axis=-1), name="preds")

        # LOSS - Sums all losses (even Regularization losses)
        with tf.variable_scope('loss') as scope:
            #unrolled_labels = tf.reshape(tf_Y, (-1,))
            #tf.losses.softmax_cross_entropy(onehot_labels=unrolled_labels,
            #tf.losses.softmax_cross_entropy(onehot_labels=tf_Y, logits=tf_logits)
            #tf_loss = tf.losses.get_total_loss()
            tf_loss = loss(tch_y_actual, tf_Y)

        # OPTIMIZATION - Also updates batchnorm operations automatically
        with tf.variable_scope('opt') as scope:
            #tf_optimizer = tf.train.AdamOptimizer(tf_alpha, name="optimizer")
            #update_ops = tf.get_collection(tf.GraphKeys.UPDATE_OPS) # for batchnorm
            #with tf.control_dependencies(update_ops):
            #    tf_train_op = tf_optimizer.minimize(tf_loss, name="train_op")
            grad_teacher = tf.gradients(tf_loss, var_teacher)
            tf_trainer = tf.train.AdamOptimizer(tf_alpha, name="optimizer")
            tf_train_step = tf_trainer.apply_gradients(zip(grad_teacher, var_teacher))

        # Evaluation
        with tf.variable_scope('eval') as scope:
            y = tf.nn.softmax(tf_logits, name='softmax')
            accuracy = tf.reduce_mean(
                tf.cast(tf.equal(tf.argmax(y, 1), tf.argmax(tf_Y, 1)), tf.float32)
            )

        # PRETRAINED SAVER SETTINGS
        # Lists of scopes of weights to include/exclude from pretrained snapshot
        pretrained_include = ["InceptionV3"]
        pretrained_exclude = ["InceptionV3/AuxLogits", "InceptionV3/Logits"]

        # PRETRAINED SAVER - For loading pretrained weights on the first run
        pretrained_vars = tf.contrib.framework.get_variables_to_restore(
            include=pretrained_include,
            exclude=pretrained_exclude)
        tf_pretrained_saver = tf.train.Saver(pretrained_vars, name="pretrained_saver")

        mbnet_pretrained_include = ["MobilenetV1"]
        mbnet_pretrained_exclude = ["MobilenetV1/Predictions", "MobilenetV1/Logits"]
        mbnet_pretrained_vars = tf.contrib.framework.get_variables_to_restore(
            include=mbnet_pretrained_include,
            exclude=mbnet_pretrained_exclude)
        mbnet_pretrained_saver = tf.train.Saver(
            mbnet_pretrained_vars, name="mobilenet_pretrained_saver")

        # Student
        # with tf.name_scope("stu_train"):
        #     # loss
        #     #tf.losses.softmax_cross_entropy(onehot_labels=tf_Y, logits=stu_logits)
        #     #stu_loss1 = tf.losses.get_total_loss()
        #     stu_loss1 = loss(stu_y_actual, tf_Y)
        #     stu_loss2 = tf.reduce_mean(- tf.reduce_sum(tch_y * tf.log(
        #         tf.clip_by_value(stu_y, 1e-10,1.0)), reduction_indices=1))
        #     stu_loss = 0.4 * stu_loss1 + stu_loss2
        #     #stu_loss = stu_loss1
        #     # optimization
        #     grad_student = tf.gradients(stu_loss,var_student)
        #     stu_trainer = tf.train.RMSPropOptimizer(learning_rate = 0.0002)
        #     #stu_trainer = tf.train.AdamOptimizer(tf_alpha, name="optimizer")
        #     #stu_trainer = tf.train.AdadeltaOptimizer()
        #     train_step_student = stu_trainer.apply_gradients(zip(grad_student, var_student))
        #     #stu_optimizer = tf.train.AdamOptimizer(tf_alpha, name="stu_optimizer")
        #     #stu_train_op = tf_optimizer.minimize(stu_loss, name="stu_train_op")
        #     # evaluation
        #     stu_accuracy = tf.reduce_mean(
        #         tf.cast(tf.equal(tf.argmax(stu_y_actual, 1), tf.argmax(tf_Y, 1)), tf.float32)
        #     )

        # Mobilenet V1
        with tf.name_scope("mbnet_train"):
            # loss
            #tf.losses.softmax_cross_entropy(onehot_labels=tf_Y, logits=mbnet_logits)
            #mbnet_loss1 = tf.losses.get_total_loss()
            mbnet_loss1 = loss(mbnet_y_actual, tf_Y)
            mbnet_loss2 = tf.reduce_mean(- tf.reduce_sum(tch_y * tf.log(
                tf.clip_by_value(mbnet_y, 1e-10,1.0)), reduction_indices=1))
            mbnet_loss = 0.4 * mbnet_loss1 + mbnet_loss2
            #mbnet_loss = mbnet_loss1
            # optimization
            grad_mbnet = tf.gradients(mbnet_loss,var_mbnet)
            mbnet_trainer = tf.train.RMSPropOptimizer(learning_rate = 0.0002)
            train_step_mbnet = mbnet_trainer.apply_gradients(zip(grad_mbnet, var_mbnet))
            # evaluation
            mbnet_accuracy = tf.reduce_mean(
                tf.cast(tf.equal(
                    tf.argmax(mbnet_y_actual, 1),
tf.argmax(tf_Y, 1)), tf.float32) ) # MAIN SAVER - For saving/restoring your complete model tf_saver = tf.train.Saver(var_teacher, name="saver") # STUDENT SAVER #stu_saver = tf.train.Saver(var_student, name="stu_saver") mbnet_saver = tf.train.Saver(var_mbnet, name="mbnet_saver") # TENSORBOARD - To visialize the architecture with tf.variable_scope('tensorboard') as scope: tf_summary_writer = tf.summary.FileWriter(TENSORBOARD_DIR, graph=graph) tf_dummy_summary = tf.summary.scalar(name="dummy", tensor=1) # In[8]: def initialize_vars(session): # INITIALIZE VARS LOAD_FROM_CHECKPOINT = False if LOAD_FROM_CHECKPOINT: #tf.train.checkpoint_exists(SNAPSHOT_FILE): print(" Loading from Main Checkpoint") session.run(tf.global_variables_initializer()) tf_saver.restore(session, SNAPSHOT_FILE) else: print("Initializing from Pretrained Weights") session.run(tf.global_variables_initializer()) tf_pretrained_saver.restore(session, PRETRAINED_SNAPSHOT_FILE) mbnet_pretrained_saver.restore(session, PRETRAINED_MOBILENET_FILE) # In[9]: with tf.Session(graph=graph) as sess: n_epochs = 2 batch_size = 32 # small batch size so inception v3 can be run on laptops steps_per_epoch = len(X_train)//batch_size steps_per_epoch_val = len(X_test)//batch_size initialize_vars(session=sess) """ try: print("#### Debuggin Section ####") ep2 = sess.run(stu2_end_point, feed_dict = {tf_X: [X_train[0]], tf_Y: [Y_train[0]], tf_is_training: True}) print("EP2 : ", ep2.keys()) print("shape of logits: ", ep2['Logits'].shape) print("shape of prediction: ", ep2['Predictions'].shape) #print("pretrained_vars: ", mbnet_pretrained_vars)""" print("##### Teacher Training Section #####") for epoch in range(n_epochs): print("----------------------------------------------", flush=True) print("EPOCH {}/{}".format(epoch+1, n_epochs), flush=True, end=' ') ## TRAINING for step in tqdm(range(steps_per_epoch)): # EXTRACT A BATCH OF TRAINING DATA X_batch = X_train[batch_size*step: batch_size*(step+1)] Y_batch = Y_train[batch_size*step: 
batch_size*(step+1)] # RUN ONE TRAINING STEP - feeding batch of data feed_dict = {tf_X: X_batch, tf_Y: Y_batch, tf_alpha:0.0001, tf_is_training: True} #loss, _ = sess.run([tf_loss, tf_train_op], feed_dict=feed_dict) tf_train_step.run(feed_dict=feed_dict) ## EVALUATE val_accuracy = [] for step in tqdm(range(steps_per_epoch_val)): # EXTRACT A BATCH OF TEST DATA X_batch = X_test[batch_size*step: batch_size*(step+1)] Y_batch = Y_test[batch_size*step: batch_size*(step+1)] # Evalution feed_dict = {tf_X: X_batch, tf_Y: Y_batch, tf_alpha:0.0001, tf_is_training: False} val_accuracy.append(accuracy.eval(feed_dict=feed_dict)) # PRINT FEED BACK - once every `print_every` steps total_val_accuracy = np.average(np.asarray(val_accuracy)) pre_logits, pre_loss = sess.run([tch_y, tf_loss], feed_dict = { tf_X: [X_test[5]], tf_Y: [Y_test[5]], tf_is_training: False }) print("\tloss: {:0.4f} val accuracy: {:0.4f}".format(pre_loss, total_val_accuracy)) plt.plot(pre_logits[0]) plt.show() # SAVE SNAPSHOT - after each epoch tf_saver.save(sess, SNAPSHOT_FILE) print("### Student Training Section ###") n_epochs = 30 steps_per_epoch = len(X_train)//batch_size // 3 # FOR DEBUG steps_per_epoch_val = len(X_test)//batch_size print("/////////////////////////////////////////////////////////") for epoch in range(n_epochs): print("----------------------------------------------", flush=True) print("EPOCH {}/{}".format(epoch+1, n_epochs), flush=True, end=' ') ## TRAINING for step in tqdm(range(steps_per_epoch)): # EXTRACT A BATCH OF TRAINING DATA X_batch = X_train[batch_size*step: batch_size*(step+1)] Y_batch = Y_train[batch_size*step: batch_size*(step+1)] # RUN ONE TRAINING STEP - feeding batch of data feed_dict = {tf_X: X_batch, tf_Y: Y_batch, tf_is_training: True} train_step_mbnet.run(feed_dict=feed_dict) ## EVALUATE val_accuracy = [] for step in tqdm(range(steps_per_epoch_val)): # EXTRACT A BATCH OF TEST DATA X_batch = X_test[batch_size*step: batch_size*(step+1)] Y_batch = Y_test[batch_size*step: 
batch_size*(step+1)] # Evalution feed_dict = {tf_X: X_batch, tf_Y: Y_batch, tf_is_training: False} val_accuracy.append(mbnet_accuracy.eval(feed_dict=feed_dict)) # PRINT FEED BACK - once every `print_every` steps total_val_accuracy = np.average(np.asarray(val_accuracy)) pre_logits, pre_loss = sess.run([mbnet_logits, mbnet_loss], feed_dict = { tf_X: X_batch, tf_Y: Y_batch, tf_is_training: False }) print("\tloss: {:0.4f} val accuracy: {:0.4f}".format(pre_loss, total_val_accuracy)) plt.plot(pre_logits[0]) plt.show() mbnet_saver.save(sess, MBNET_SNAPSHOT_FILE) # In[10]: end = time.time() print("Time : {0}".format(end-start)) # In[ ]: # In[ ]:

For conversion

#!/usr/bin/env python # coding: utf-8 # In[1]: import os,time,glob,sys os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID" os.environ["CUDA_VISIBLE_DEVICES"] = "0" import tensorflow as tf import numpy as np import tensorflow.contrib.slim as slim import tensorflow.contrib.slim.nets import nets.mobilenet_v1 # In[2]: MBNET_SNAPSHOT_FILE = "./models/mbnet_student_snapshot.ckpt" MBNET_FLOZEN_FILE = "./models/mbnet_flozen.ckpt" # somewhere to store the tensorboard files - to visualise the graph TENSORBOARD_DIR = "logs" [os.remove(i) for i in glob.glob(os.path.join(TENSORBOARD_DIR, '*.nanase'))] # IMAGE SETTINGS IMG_WIDTH, IMG_HEIGHT = [224,224] # Dimensions required by inception V3 N_CHANNELS = 3 # Number of channels required by inception V3 N_CLASSES = 101 # Change N_CLASSES to suit your needs temperature = 20 # In[3]: def NetworkStudent2(input,scope='Student', tf_is_training=False, reuse = False): #with tf.variable_scope(scope, reuse = reuse) as sc: arg_scope = nets.mobilenet_v1.mobilenet_v1_arg_scope() with tf.contrib.framework.arg_scope(arg_scope): stu2_logits, stu2_end_points = nets.mobilenet_v1.mobilenet_v1( scaled_inputs, num_classes=N_CLASSES, is_training=False)#, #depth_multiplier=1.0) return stu2_logits, stu2_end_points # In[4]: graph = tf.Graph() with graph.as_default(): # INPUTS with tf.name_scope("inputs") as scope: input_dims = (None, IMG_HEIGHT, IMG_WIDTH, N_CHANNELS) tf_X = tf.placeholder(tf.float32, shape=input_dims, name="X") # PREPROCESSING STEPS with tf.name_scope("preprocess") as scope: #scaled_inputs = tf.div(tf_X, 255., name="rescaled_inputs") scaled_inputs = tf_X # Student mbnet_logits, mbnet_end_point = NetworkStudent2(scaled_inputs, scope='mbnet') with tf.name_scope("mbnet_struct"): # softmax mbnet_y_actual = tf.nn.softmax(mbnet_logits, name="actual_softmax") # Seperate vars model_vars = tf.trainable_variables() var_mbnet = [var for var in model_vars if 'MobilenetV1' in var.name] # parameter total_parameters = 0 for variable in 
tf.trainable_variables(): # shape is an array of tf.Dimension shape = variable.get_shape() #print(shape) #print(len(shape)) variable_parameters = 1 for dim in shape: #print(dim) variable_parameters *= dim.value #print(variable_parameters) total_parameters += variable_parameters print("total params: ",total_parameters) # STUDENT SAVER #mbnet_saver = tf.train.Saver(var_mbnet, name="mbnet_saver") mbnet_saver = tf.train.Saver(tf.global_variables(), name="mbnet_saver") # TENSORBOARD - To visialize the architecture with tf.variable_scope('tensorboard') as scope: tf_summary_writer = tf.summary.FileWriter(TENSORBOARD_DIR, graph=graph) tf_dummy_summary = tf.summary.scalar(name="dummy", tensor=1) # In[5]: with tf.Session(graph=graph) as sess: sess.run(tf.global_variables_initializer()) sess.run(tf.local_variables_initializer()) mbnet_saver.restore(sess, MBNET_SNAPSHOT_FILE) #sess.run(tf.initialize_all_variables()) mbnet_saver.save(sess, MBNET_FLOZEN_FILE)

loadimg

#!/usr/bin/env python2 import os import numpy as np import tensorflow as tf from keras.preprocessing.image import load_img, img_to_array from keras.utils import np_utils import matplotlib.pyplot as plt import glob from sklearn.model_selection import train_test_split IMGSIZE = 224 IMGSIZE = 224 def loadimg_one(DIRPATH, NUM): x = [] y = [] img_list = os.listdir(DIRPATH) img_list = sorted(img_list) if (NUM) and (len(img_list) > NUM): img_list = img_list[:NUM] #print("[loadimg] : img_list : ", end=' ') #print(img_list) with open('categories.txt', 'w') as f: f.write('\n'.join(img_list)) f.write('\n') img_count = 0 for number in img_list: dirpath = os.path.join(DIRPATH, number) dirpic_list = glob.glob(os.path.join(dirpath, '*.jpg')) dirpic_list += glob.glob(os.path.join(dirpath, '*.png')) for picture in dirpic_list: #img = img_to_array(load_img(picture, color_mode = "grayscale", target_size=(IMGSIZE, IMGSIZE))) img = img_to_array(load_img(picture, target_size=(IMGSIZE, IMGSIZE))) x.append(img) y.append(img_count) #print("Load {0} : {1}".format(picture, img_count)) img_count += 1 output_count = img_count x = np.asarray(x) x = x.astype('float32') x = x/255.0 y = np.asarray(y, dtype=np.int32) y = np_utils.to_categorical(y, output_count) return x, y, output_count def loadimg(COMMONDIR='./', NUM=None): print("########## loadimg ########") #COMMONDIR = './make_image' #TRAINDIR = os.path.join(COMMONDIR, 'train') #TESTDIR = os.path.join(COMMONDIR, 'test') x, y, class_count = loadimg_one(COMMONDIR, NUM) #x_test, y_test, _ = loadimg_one(TESTDIR, NUM) #for i in range(0, x_test.shape[0]): # plt.imshow(x_test[i]) # plt.show() #x = np.concatenate((x_train, x_test)) #x = np.reshape(x, [-1, 784]) #y = np.concatenate((y_train, y_test)) print("x_train, y_train, x_test, y_test, class_count") print("x_train shape : ", x.shape) print("########## END of loadimg ########") x_train, x_test, y_train, y_test = train_test_split(x, y,train_size=0.8, test_size=0.2) return x_train, y_train, x_test, 
y_test, class_count if __name__ == '__main__': loadimg()

Movidius

import mvnc.mvncapi as mvnc import numpy as np from PIL import Image import cv2 import time, sys, os import glob IMAGE_DIR_NAME = '/home/tokunn/caltech101' if (len(sys.argv) > 1): IMAGE_DIR_NAME = sys.argv[1] #IMAGE_DIR_NAME = 'github_deep_mnist/ncappzoo/data/digit_images' CATEGORIES_FILE = './categories.txt' with open(CATEGORIES_FILE, 'r') as f: categories = f.read().split('\n') def predict(input): print("Start predicting ...") devices = mvnc.EnumerateDevices() device = mvnc.Device(devices[0]) device.OpenDevice() # Load graph file data with open('./models/graph', 'rb') as f: graph_file_buffer = f.read() # Initialize a Graph object graph = device.AllocateGraph(graph_file_buffer) predict = [] start = time.time() for i in range(len(input)): # Write the tensor to the input_fifo and queue an inference graph.LoadTensor(input[i], None) output, userobj = graph.GetResult() predict.append(np.argmax(output)) stop = time.time() for i in predict: print(categories[i], end=' ', flush=True) print('') print("Time : {0} ({1} images)".format(stop-start, len(input))) graph.DeallocateGraph() device.CloseDevice() return output if __name__ == '__main__': print("Image path : {0}".format(os.path.join(IMAGE_DIR_NAME, '*.jpg or *.png'))) jpg_list = glob.glob(os.path.join(IMAGE_DIR_NAME, '*.jpg')) jpg_list += glob.glob(os.path.join(IMAGE_DIR_NAME, '*.png')) if not len(jpg_list): print("No image file") sys.exit() jpg_list.reverse() print([i.split('/')[-1] for i in jpg_list][:10]) img_list = [] for n in jpg_list: image = cv2.imread(n) image = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY) image = cv2.resize(image, (224, 224)) img_list.append(image) img_list = np.asarray(img_list) * (1.0/255.0) #img_list = np.reshape(img_list, [-1, 784]) print("imgshape ", img_list.shape) predict(img_list.astype(np.float16))

Fine-Tuning InceptionV3

Fine-tune an InceptionV3 model, pretrained on ImageNet, on Caltech101.

Fine-tune by following the reference site.

Reference: http://ronny.rest/blog/post_2017_10_13_tf_transfer_learning/

Let's try it.

As usual, the pretrained Inception V3 model comes from https://github.com/tensorflow/models/tree/master/research/slim ; choose http://download.tensorflow.org/models/inception_v3_2016_08_28.tar.gz
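The source code further down restores only part of the pretrained checkpoint: it includes the `InceptionV3` scope and excludes `InceptionV3/AuxLogits` and `InceptionV3/Logits`, presumably because the pretrained classification heads were trained for a different number of classes than Caltech101. The following is a minimal pure-Python sketch of that kind of scope filtering. It is a simplified stand-in, not the implementation of `tf.contrib.framework.get_variables_to_restore`; the helper `select_variables` and the variable names in the list are illustrative assumptions.

```python
# Simplified stand-in for scope-based variable selection.
# This is NOT the TensorFlow implementation; it only illustrates the idea.

def select_variables(names, include, exclude):
    """Return names that fall under an `include` scope and under no `exclude` scope."""
    def in_scope(name, scope):
        # Match whole scope components, so "InceptionV3/Logits" does not
        # accidentally match something like "InceptionV3/LogitsExtra".
        return name == scope or name.startswith(scope + "/")

    selected = []
    for name in names:
        if not any(in_scope(name, s) for s in include):
            continue
        if any(in_scope(name, s) for s in exclude):
            continue
        selected.append(name)
    return selected


# Illustrative variable names (assumed for this example).
variable_names = [
    "InceptionV3/Conv2d_1a_3x3/weights",
    "InceptionV3/Mixed_5b/Branch_0/Conv2d_0a_1x1/weights",
    "InceptionV3/AuxLogits/Conv2d_2b_1x1/weights",
    "InceptionV3/Logits/Conv2d_1c_1x1/weights",
    "opt/optimizer/beta1_power",
]

restored = select_variables(
    variable_names,
    include=["InceptionV3"],
    exclude=["InceptionV3/AuxLogits", "InceptionV3/Logits"],
)
print(restored)
```

Everything that is filtered out here (the two heads and the optimizer state) is what `tf.global_variables_initializer()` has to initialize from scratch before the pretrained saver restores the rest.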

Input and output

Input: Caltech101

Output: 101 classes

Batch size: 32

Results

It worked.

Initializing from Pretrained Weights

INFO:tensorflow:Restoring parameters from ./models/inception_v3.ckpt

----------------------------------------------

EPOCH 1/20

100%|██████████| 216/216 [01:45<00:00, 2.41it/s]

100%|██████████| 54/54 [00:07<00:00, 8.15it/s]

step: 53 loss: 0.3671 val accuracy: 0.8681

----------------------------------------------

EPOCH 2/20

100%|██████████| 216/216 [01:29<00:00, 2.41it/s]

100%|██████████| 54/54 [00:06<00:00, 8.13it/s]

step: 53 loss: 0.2679 val accuracy: 0.9236

----------------------------------------------

EPOCH 19/20

100%|██████████| 216/216 [01:31<00:00, 2.34it/s]

100%|██████████| 54/54 [00:06<00:00, 7.97it/s]

step: 53 loss: 0.2040 val accuracy: 0.9659

----------------------------------------------

EPOCH 20/20

100%|██████████| 216/216 [01:31<00:00, 2.37it/s]

100%|██████████| 54/54 [00:06<00:00, 8.05it/s]

step: 53 loss: 0.2002 val accuracy: 0.9653
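The 216 and 54 in the progress bars are the number of full batches per epoch for training and validation. The script computes them with integer division (`len(X_train)//batch_size`), so any leftover samples that do not fill a batch are skipped. The sketch below reproduces that slicing in plain Python. The dataset sizes are hypothetical, chosen only so that the step counts match the log; the per-batch accuracies are made-up values.

```python
def batch_bounds(n_samples, batch_size):
    """Yield (start, stop) index pairs for full batches only."""
    steps = n_samples // batch_size  # the remainder is dropped
    for step in range(steps):
        yield batch_size * step, batch_size * (step + 1)


# Hypothetical dataset sizes (assumption), picked to match the step counts in the log.
n_train, n_test, batch_size = 6912, 1728, 32

train_batches = list(batch_bounds(n_train, batch_size))
val_batches = list(batch_bounds(n_test, batch_size))
print(len(train_batches), len(val_batches))

# The reported "val accuracy" is the plain mean of the per-batch accuracies.
per_batch_accuracy = [0.9375, 1.0, 0.96875]  # made-up values for illustration
total_val_accuracy = sum(per_batch_accuracy) / len(per_batch_accuracy)
print(total_val_accuracy)
```

The 4:1 ratio between the two step counts is what you would expect from the 0.8/0.2 split in `loadimg`.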

Source code

The code from the reference site, modified for the number plate data.

#!/usr/bin/env python # coding: utf-8 # In[1]: import os,time os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID" os.environ["CUDA_VISIBLE_DEVICES"] = "0" import tensorflow as tf import numpy as np import tensorflow.contrib.slim as slim import tensorflow.contrib.slim.nets #from __future__ import print_function, division import loadimg from tqdm import tqdm import matplotlib.pyplot as plt start = time.time() # In[2]: np_aryname = './models/data{0}.npy' SAVE = False if SAVE: X_train, Y_train, X_test, Y_test, number_of_classes = loadimg.loadimg( '/home/tokunn/caltech101') np.save(np_aryname.format('X_train'), X_train) np.save(np_aryname.format('Y_train'), Y_train) np.save(np_aryname.format('X_test'), X_test) np.save(np_aryname.format('Y_test'), Y_test) np.save(np_aryname.format('number_of_classes'), number_of_classes) else: # LOAD X_train = np.load(np_aryname.format('X_train')) Y_train = np.load(np_aryname.format('Y_train')) X_test = np.load(np_aryname.format('X_test')) Y_test = np.load(np_aryname.format('Y_test')) number_of_classes = np.load(np_aryname.format('number_of_classes')) print("X_train", X_train.shape) print("Y_train", Y_train.shape) print("X_test", X_test.shape) print("Y_test", Y_test.shape) print("Number of Classes", number_of_classes) # In[3]: SNAPSHOT_FILE = "./models/snapshot.ckpt" PRETRAINED_SNAPSHOT_FILE = "./models/inception_v3.ckpt" # somewhere to store the tensorboard files - to visualise the graph TENSORBOARD_DIR = "logs" # IMAGE SETTINGS IMG_WIDTH, IMG_HEIGHT = [299,299] # Dimensions required by inception V3 N_CHANNELS = 3 # Number of channels required by inception V3 N_CLASSES = number_of_classes # Change N_CLASSES to suit your needs # In[4]: graph = tf.Graph() with graph.as_default(): # INPUTS with tf.name_scope("inputs") as scope: input_dims = (None, IMG_HEIGHT, IMG_WIDTH, N_CHANNELS) tf_X = tf.placeholder(tf.float32, shape=input_dims, name="X") tf_Y = tf.placeholder(tf.int32, shape=[None], name="Y") tf_alpha = tf.placeholder_with_default(0.001, 
shape=None, name="alpha") tf_is_training = tf.placeholder_with_default(False, shape=None, name="is_training") # PREPROCESSING STEPS with tf.name_scope("preprocess") as scope: scaled_inputs = tf.div(tf_X, 255., name="rescaled_inputs") # BODY arg_scope = tf.contrib.slim.nets.inception.inception_v3_arg_scope() with tf.contrib.framework.arg_scope(arg_scope): tf_logits, end_points = tf.contrib.slim.nets.inception.inception_v3( scaled_inputs, num_classes=N_CLASSES, is_training=tf_is_training, dropout_keep_prob=0.8) # PREDICTIONS tf_preds = tf.to_int32(tf.argmax(tf_logits, axis=-1), name="preds") # LOSS - Sums all losses (even Regularization losses) with tf.variable_scope('loss') as scope: unrolled_labels = tf.reshape(tf_Y, (-1,)) tf.losses.sparse_softmax_cross_entropy(labels=unrolled_labels, logits=tf_logits) tf_loss = tf.losses.get_total_loss() # OPTIMIZATION - Also updates batchnorm operations automatically with tf.variable_scope('opt') as scope: tf_optimizer = tf.train.AdamOptimizer(tf_alpha, name="optimizer") update_ops = tf.get_collection(tf.GraphKeys.UPDATE_OPS) # for batchnorm with tf.control_dependencies(update_ops): tf_train_op = tf_optimizer.minimize(tf_loss, name="train_op") # Evalution with tf.variable_scope('eval') as scope: y = tf.nn.softmax(tf_logits, name='softmax') accuracy = tf.reduce_mean( tf.cast(tf.equal(tf.argmax(y, 1), tf.cast(tf_Y, tf.int64)), tf.float32) ) # PRETRAINED SAVER SETTINGS # Lists of scopes of weights to include/exclude from pretrained snapshot pretrained_include = ["InceptionV3"] pretrained_exclude = ["InceptionV3/AuxLogits", "InceptionV3/Logits"] # PRETRAINED SAVER - For loading pretrained weights on the first run pretrained_vars = tf.contrib.framework.get_variables_to_restore( include=pretrained_include, exclude=pretrained_exclude) tf_pretrained_saver = tf.train.Saver(pretrained_vars, name="pretrained_saver") # MAIN SAVER - For saving/restoring your complete model tf_saver = tf.train.Saver(name="saver") # TENSORBOARD - To visialize 
the architecture with tf.variable_scope('tensorboard') as scope: tf_summary_writer = tf.summary.FileWriter(TENSORBOARD_DIR, graph=graph) tf_dummy_summary = tf.summary.scalar(name="dummy", tensor=1) # In[5]: def initialize_vars(session): # INITIALIZE VARS if False: #tf.train.checkpoint_exists(SNAPSHOT_FILE): print(" Loading from Main Checkpoint") tf_saver.restore(session, SNAPSHOT_FILE) else: print("Initializing from Pretrained Weights") session.run(tf.global_variables_initializer()) tf_pretrained_saver.restore(session, PRETRAINED_SNAPSHOT_FILE) # In[ ]: with tf.Session(graph=graph) as sess: n_epochs = 20 print_every = 32 batch_size = 32 # small batch size so inception v3 can be run on laptops steps_per_epoch = len(X_train)//batch_size steps_per_epoch_val = len(X_test)//batch_size initialize_vars(session=sess) for epoch in range(n_epochs): print("----------------------------------------------", flush=True) print("EPOCH {}/{}".format(epoch+1, n_epochs), flush=True, end=' ') #print("----------------------------------------------", flush=True) for step in tqdm(range(steps_per_epoch)): # EXTRACT A BATCH OF TRAINING DATA X_batch = X_train[batch_size*step: batch_size*(step+1)] Y_batch = Y_train[batch_size*step: batch_size*(step+1)] # RUN ONE TRAINING STEP - feeding batch of data feed_dict = {tf_X: X_batch, tf_Y: Y_batch, tf_alpha:0.0001, tf_is_training: True} loss, _ = sess.run([tf_loss, tf_train_op], feed_dict=feed_dict) val_accuracy = [] for step in tqdm(range(steps_per_epoch_val)): # EXTRACT A BATCH OF TEST DATA X_batch = X_test[batch_size*step: batch_size*(step+1)] Y_batch = Y_test[batch_size*step: batch_size*(step+1)] # Evalution feed_dict = {tf_X: X_batch, tf_Y: Y_batch, tf_alpha:0.0001, tf_is_training: False} val_accuracy.append(accuracy.eval(feed_dict=feed_dict)) # PRINT FEED BACK - once every `print_every` steps total_val_accuracy = np.average(np.asarray(val_accuracy)) print("\tstep: {: 4d} loss: {:0.4f} val accuracy: {:0.4f}".format( step, loss, 
total_val_accuracy)) plt.plot(sess.run(tf_logits, feed_dict = { tf_X: [X_test[0]], tf_Y: [Y_test[0]], tf_is_training: False })[0]) # SAVE SNAPSHOT - after each epoch tf_saver.save(sess, SNAPSHOT_FILE) # In[ ]: end = time.time() print("Time : {0}".format(end-start)) # In[ ]: plt.show()

Running a model written in TensorFlow Slim (TF-Slim) on Movidius, plus pseudo-distillation

What is TF-Slim?

Something like a set of macros over TensorFlow's low-level API. It makes models comparatively easy to write.

Defining variables

weights = slim.model_variable('weights', shape=[10, 10, 3, 3])
my_var = slim.variable('my_var', shape=[20, 1], initializer=tf.zeros_initializer())

Adding layers

net = slim.conv2d(input, 128, [3, 3], scope='conv1_1')

| Layer | TF-Slim |
|---|---|
| BiasAdd | slim.bias_add |
| BatchNorm | slim.batch_norm |
| Conv2d | slim.conv2d |
| Conv2dInPlane | slim.conv2d_in_plane |
| Conv2dTranspose (Deconv) | slim.conv2d_transpose |
| FullyConnected | slim.fully_connected |
| AvgPool2D | slim.avg_pool2d |
| Dropout | slim.dropout |
| Flatten | slim.flatten |
| MaxPool2D | slim.max_pool2d |
| OneHotEncoding | slim.one_hot_encoding |
| SeparableConv2 | slim.separable_conv2d |
| UnitNorm | slim.unit_norm |

Sample

import numpy as np
import tensorflow as tf
from tensorflow.contrib.slim.nets import inception

slim = tf.contrib.slim

def run(name, image_size, num_classes):
    with tf.Graph().as_default():
        image = tf.placeholder("float", [1, image_size, image_size, 3], name="input")
        with slim.arg_scope(inception.inception_v1_arg_scope()):
            logits, _ = inception.inception_v1(image, num_classes, is_training=False, spatial_squeeze=False)
        probabilities = tf.nn.softmax(logits)
        init_fn = slim.assign_from_checkpoint_fn('inception_v1.ckpt', slim.get_model_variables('InceptionV1'))
        with tf.Session() as sess:
            init_fn(sess)
            saver = tf.train.Saver(tf.global_variables())
            saver.save(sess, "output/"+name)

run('inception-v1', 224, 1001)

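Distillation with TF-Slim

I stitched together source code I found and forced it to run; it just barely works.

Using number plate images, distill into a network made of fully connected layers only.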
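The student loss in the source code below is the sum of two cross-entropy terms: one against the true one-hot labels (student softmax without temperature) and one against the teacher's temperature-softened output (student softmax with the same temperature). Here is a minimal pure-Python sketch of that loss for a single sample. The function names and the example logits are assumptions for illustration; the actual script computes the same quantities with TensorFlow ops and averages over the batch.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax of logits / temperature, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(target, prediction, eps=1e-10):
    """-sum(target * log(prediction)), with the prediction clipped to [eps, 1]."""
    clipped = [min(max(p, eps), 1.0) for p in prediction]
    return -sum(t * math.log(p) for t, p in zip(target, clipped))


def distillation_loss(student_logits, teacher_logits, one_hot_label, temperature):
    # Hard-label term: student softmax without temperature vs. the true label.
    hard = cross_entropy(one_hot_label, softmax(student_logits))
    # Soft-label term: student vs. teacher, both softened by the temperature.
    soft = cross_entropy(
        softmax(teacher_logits, temperature),
        softmax(student_logits, temperature),
    )
    return hard + soft


# Made-up logits for a 3-class example (assumption).
teacher_logits = [4.0, 1.0, 0.5]
label = [1.0, 0.0, 0.0]
temperature = 1.0  # the number plate script below sets temperature = 1

loss_good = distillation_loss([4.0, 1.0, 0.5], teacher_logits, label, temperature)
loss_bad = distillation_loss([0.5, 1.0, 4.0], teacher_logits, label, temperature)
print(loss_good, loss_bad)

# A higher temperature flattens the distribution.
print(softmax(teacher_logits, 1.0))
print(softmax(teacher_logits, 20.0))
```

With temperature 1 the soft targets are just the teacher's ordinary softmax output, so most of the softening effect that distillation relies on is absent; raising the temperature spreads probability mass over the non-argmax classes.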

Converting the distilled model for Movidius

Check the output node in the graph

fw = tf.summary.FileWriter('logs', sess.graph)

fw.close()

tensorboard --logdir logs

Compile

mvNCCompile -s 12 student_flozen.ckpt.meta -in=input -on=output -o graph
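In the command above, `-s` is the number of SHAVE cores, `-in` and `-on` are the input and output node names, and `-o` is the output graph file. The node names have to match the ones given in the conversion script (`name='input'` and `name='output'`). If you want to drive the compile step from Python, the argument list could be assembled as below. This is only a sketch: the helper `build_mvnccompile_command` is a hypothetical name, and the code only builds the command line, it does not run the tool.

```python
import shlex


def build_mvnccompile_command(meta_file, input_node, output_node,
                              shaves=12, output_file="graph"):
    """Build the mvNCCompile argument list in the same form as the command above."""
    return [
        "mvNCCompile",
        "-s", str(shaves),
        meta_file,
        "-in={}".format(input_node),
        "-on={}".format(output_node),
        "-o", output_file,
    ]


command = build_mvnccompile_command("student_flozen.ckpt.meta", "input", "output")
print(" ".join(shlex.quote(part) for part in command))
```

The resulting list can be handed to `subprocess.run(command, check=True)` on a machine where the NCSDK is installed.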

Run

tokunn@nanase 1:18:01 [~/Documents/distil_mnist/second_challenge] $ python3 movidius.py /home/tokunn/make_image/test/3186 2>/dev/null
Image path : /home/tokunn/make_image/test/3186/*.jpg or *.png
['extend_5_0_5934.png', 'extend_9_0_1460.png', 'extend_9_0_6437.png', 'extend_9_0_733.png', 'extend_13_0_8860.png', 'extend_5_0_1296.png', 'extend_5_0_320.png', 'extend_5_0_2227.png', 'extend_5_0_6957.png', 'extend_1_0_2447.png']
imgshape (25, 784)
Start prediting ...
1 7 7 7 6 7 7 7 7 8 1 7 7 7 7 7 7 2 2 7 7 3 9 1 2
Time : 0.10333132743835449 (25 images)

It ran more smoothly than I expected.
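To put the reported time in perspective, it can be converted into per-image latency and throughput. The numbers below are taken from the run above; note that the script only times the `LoadTensor`/`GetResult` loop, so opening the device and allocating the graph are not included.

```python
elapsed_seconds = 0.10333132743835449  # "Time" value from the run above
n_images = 25

ms_per_image = elapsed_seconds / n_images * 1000.0
images_per_second = n_images / elapsed_seconds

print("{:.2f} ms/image, {:.1f} images/s".format(ms_per_image, images_per_second))
```

That works out to roughly 4 ms per 28x28 image for this small fully connected student network.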

Source code

Number plate teacher and student networks

#!/usr/bin/env python # coding: utf-8 # In[1]: import os os.environ['TF_CPP_MIN_LOG_LEVEL']='2' os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID" os.environ["CUDA_VISIBLE_DEVICES"] = "0" import tensorflow as tf import numpy as np import tensorflow.contrib.slim as slim from tensorflow.examples.tutorials.mnist import input_data import loadimg # In[2]: #config = tf.ConfigProto() #config.gpu_options.per_process_gpu_memory_fraction = 0.25 #sess = tf.Session(config=config) config = tf.ConfigProto( gpu_options=tf.GPUOptions( visible_device_list="1", # specify GPU number allow_growth=False ) ) #sess = tf.Session(config=config) # In[3]: NUMBER_OF_CLASS = 10 # In[4]: def MnistNetworkTeacher(input,keep_prob_conv,keep_prob_hidden,scope='Mnist',reuse = False): with tf.variable_scope(scope,reuse = reuse) as sc : with slim.arg_scope([slim.conv2d], kernel_size = [3,3], stride = [1,1], biases_initializer=tf.constant_initializer(0.0), activation_fn=tf.nn.relu): net = slim.conv2d(input, 32, scope='conv1') net = slim.max_pool2d(net,[2, 2], 2, scope='pool1') net = tf.nn.dropout(net, keep_prob_conv) net = slim.conv2d(net, 64,scope='conv2') net = slim.max_pool2d(net,[2, 2], 2, scope='pool2') net = tf.nn.dropout(net, keep_prob_conv) net = slim.conv2d(net, 128,scope='conv3') net = slim.max_pool2d(net,[2, 2], 2, scope='pool3') net = tf.nn.dropout(net, keep_prob_conv) net = slim.flatten(net) with slim.arg_scope([slim.fully_connected], biases_initializer=tf.constant_initializer(0.0), activation_fn=tf.nn.relu) : net = slim.fully_connected(net,625,scope='fc1') net = tf.nn.dropout(net, keep_prob_hidden) net = slim.fully_connected(net,NUMBER_OF_CLASS,activation_fn=None,scope='fc2') net = tf.nn.softmax(net/temperature) return net # In[5]: def MnistNetworkStudent(input,scope='Mnist',reuse = False): with tf.variable_scope(scope,reuse = reuse) as sc : with slim.arg_scope([slim.fully_connected], biases_initializer=tf.constant_initializer(0.0), activation_fn=tf.nn.sigmoid): net = 
slim.fully_connected(input,1000,scope = 'fc1') net = slim.fully_connected(net, NUMBER_OF_CLASS, activation_fn = None, scope = 'fc2') return net # In[6]: def loss(prediction,output,temperature = 1): cross_entropy = tf.reduce_mean(-tf.reduce_sum( output * tf.log(tf.clip_by_value(prediction,1e-10,1.0)), reduction_indices=[1])) correct_prediction = tf.equal(tf.argmax(prediction,1), tf.argmax(output,1)) accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32)) return cross_entropy,accuracy # In[7]: eps = 0.1 alpha = 0.5 temperature = 1 start_lr = 1e-4 decay = 1e-6 # In[8]: with tf.Graph().as_default(): x = tf.placeholder(tf.float32, shape=[None, 784], name='input') y_ = tf.placeholder(tf.float32, shape=[None, NUMBER_OF_CLASS]) keep_prob_conv = tf.placeholder(tf.float32) keep_prob_hidden = tf.placeholder(tf.float32) x_image = tf.reshape(x, [-1,28,28,1]) y_conv_teacher=MnistNetworkTeacher(x_image,keep_prob_conv, keep_prob_hidden,scope = 'teacher') y_conv = MnistNetworkStudent(x,scope = 'student') y_conv_student = tf.nn.softmax(y_conv/temperature) y_conv_student_actual = tf.nn.softmax(y_conv) cross_entropy_teacher, accuracy_teacher=loss(y_conv_teacher, y_, temperature = temperature) student_loss1, accuracy_student = loss(y_conv_student_actual, y_, temperature = temperature) student_loss2 = tf.reduce_mean( - tf.reduce_sum(y_conv_teacher * tf.log(tf.clip_by_value(y_conv_student, 1e-10,1.0)), reduction_indices=1) ) cross_entropy_student = student_loss1 + student_loss2 model_vars = tf.trainable_variables() var_teacher = [var for var in model_vars if 'teacher' in var.name] var_student = [var for var in model_vars if 'student' in var.name] grad_teacher = tf.gradients(cross_entropy_teacher,var_teacher) grad_student = tf.gradients(cross_entropy_student,var_student) l_rate = tf.placeholder(shape=[],dtype = tf.float32) trainer = tf.train.RMSPropOptimizer(learning_rate = l_rate) trainer1 = tf.train.GradientDescentOptimizer(0.1) train_step_teacher = 
trainer.apply_gradients(zip(grad_teacher,var_teacher)) train_step_student = trainer1.apply_gradients(zip(grad_student,var_student)) sess = tf.InteractiveSession(config=config) sess.run(tf.global_variables_initializer()) saver1 = tf.train.Saver(var_teacher) saver2 = tf.train.Saver(var_student) # In[9]: #mnist = input_data.read_data_sets("MNIST_data/", one_hot=True) x_train, y_train, x_test, y_test, class_count = loadimg.loadimg( '/home/tokunn/make_image/', NUMBER_OF_CLASS ) # In[10]: for i in range(10000): #batch = mnist.train.next_batch(128) s = 128*i % len(x_train) batch = [x_train[s:s+128], y_train[s:s+128]] lr = start_lr * 1.0/(1.0 + i*decay) if i%100 ==0: train_accuracy = accuracy_teacher.eval(feed_dict={x:x_test, y_: y_test, keep_prob_conv: 1.0, keep_prob_hidden: 1.0}) print("step %d, training accuracy %g,"%(i, train_accuracy)) train_step_teacher.run(feed_dict={x: batch[0], y_: batch[1], keep_prob_conv :0.8, #keep_prob_hidden:0.5}) keep_prob_hidden:0.5, l_rate:lr}) saver1.save(sess,'./models/teacher1.ckpt') print('*'*20) for i in range(30000): #batch = mnist.train.next_batch(100) s = 128*i % len(x_train) batch = [x_train[s:s+100], y_train[s:s+100]] if i%100 == 0: train_accuracy = accuracy_student.eval(feed_dict={x:x_test, y_: y_test, keep_prob_conv: 1.0, keep_prob_hidden: 1.0}) print("step %d, training accuracy %g"%(i, train_accuracy)) train_step_student.run(feed_dict={x: batch[0], y_: batch[1], keep_prob_conv :1.0, keep_prob_hidden:1.0}) saver2.save(sess,'./models/student.ckpt') # In[11]: test_acc = sess.run(accuracy_student,feed_dict={x: x_test, y_: y_test, keep_prob_conv: 1.0, keep_prob_hidden: 1.0}) print("test accuracy of the student model is %g "%(test_acc)) # In[13]: fw = tf.summary.FileWriter('logs', sess.graph) fw.close() # In[ ]:

loadimg

#!/usr/bin/env python2 import os import numpy as np import tensorflow as tf from keras.preprocessing.image import load_img, img_to_array from keras.utils import np_utils import matplotlib.pyplot as plt import glob from sklearn.model_selection import train_test_split IMGSIZE = 28 IMGSIZE = 28 def loadimg_one(DIRPATH, NUM): x = [] y = [] img_list = os.listdir(DIRPATH) if (NUM) and (len(img_list) > NUM): img_list = img_list[:NUM] #print("[loadimg] : img_list : ", end=' ') #print(img_list) img_count = 0 for number in img_list: dirpath = os.path.join(DIRPATH, number) dirpic_list = glob.glob(os.path.join(dirpath, '*.jpg')) dirpic_list += glob.glob(os.path.join(dirpath, '*.png')) for picture in dirpic_list: img = img_to_array(load_img(picture, color_mode = "grayscale", target_size=(IMGSIZE, IMGSIZE))) x.append(img) y.append(img_count) #print("Load {0} : {1}".format(picture, img_count)) img_count += 1 output_count = img_count x = np.asarray(x) x = x.astype('float32') x = x/255.0 y = np.asarray(y, dtype=np.int32) y = np_utils.to_categorical(y, output_count) return x, y, output_count def loadimg(COMMONDIR='./', NUM=None): print("########## loadimg ########") #COMMONDIR = './make_image' TRAINDIR = os.path.join(COMMONDIR, 'train') TESTDIR = os.path.join(COMMONDIR, 'test') x_train, y_train, class_count = loadimg_one(TRAINDIR, NUM) x_test, y_test, _ = loadimg_one(TESTDIR, NUM) #for i in range(0, x_test.shape[0]): # plt.imshow(x_test[i]) # plt.show() x = np.concatenate((x_train, x_test)) x = np.reshape(x, [-1, 784]) y = np.concatenate((y_train, y_test)) print("x_train, y_train, x_test, y_test, class_count") print("x_train shape : ", x_train.shape) print("########## END of loadimg ########") x_train, x_test, y_train, y_test = train_test_split(x, y,train_size=0.2, test_size=0.8) return x_train, y_train, x_test, y_test, class_count if __name__ == '__main__': loadimg()

Conversion code

#!/usr/bin/env python # coding: utf-8 # In[1]: import os os.environ['TF_CPP_MIN_LOG_LEVEL']='2' import tensorflow as tf import numpy as np import tensorflow.contrib.slim as slim from tensorflow.examples.tutorials.mnist import input_data import loadimg # In[2]: NUMBER_OF_CLASS = 10 # In[3]: def MnistNetworkStudent(input,scope='Mnist',reuse = False): with tf.variable_scope(scope,reuse = reuse) as sc : with slim.arg_scope([slim.fully_connected], biases_initializer=tf.constant_initializer(0.0), activation_fn=tf.nn.sigmoid): net = slim.fully_connected(input,1000,scope = 'fc1') net = slim.fully_connected(net, NUMBER_OF_CLASS, activation_fn = None, scope = 'fc2') return net # In[4]: eps = 0.1 alpha = 0.5 temperature = 1 start_lr = 1e-4 decay = 1e-6 # In[5]: with tf.Graph().as_default(): x = tf.placeholder(tf.float32, shape=[None, 784], name='input') x_image = tf.reshape(x, [-1,28,28,1]) y_conv = MnistNetworkStudent(x,scope = 'student') y_conv_student = tf.nn.softmax(y_conv/temperature, name='output_temp') y_conv_student_actual = tf.nn.softmax(y_conv, name='output') model_vars = tf.trainable_variables() var_student = [var for var in model_vars if 'student' in var.name] sess = tf.InteractiveSession() sess.run(tf.global_variables_initializer()) sess.run(tf.local_variables_initializer()) saver2 = tf.train.Saver(var_student) # In[6]: saver2.restore(sess, './models/student.ckpt') saver2.save(sess,'./models/student_flozen.ckpt') # In[7]: fw = tf.summary.FileWriter('logs', sess.graph) fw.close()

Prediction on Movidius

import mvnc.mvncapi as mvnc
import numpy as np
from PIL import Image
import cv2
import time, sys, os
import glob

IMAGE_DIR_NAME = '/home/tokunn/make_image/'
if (len(sys.argv) > 1):
    IMAGE_DIR_NAME = sys.argv[1]
#IMAGE_DIR_NAME = 'github_deep_mnist/ncappzoo/data/digit_images'

def predict(input):
    print("Start predicting ...")
    devices = mvnc.EnumerateDevices()
    device = mvnc.Device(devices[0])
    device.OpenDevice()

    # Load graph file data
    with open('./models/graph', 'rb') as f:
        graph_file_buffer = f.read()

    # Initialize a Graph object
    graph = device.AllocateGraph(graph_file_buffer)

    start = time.time()
    for i in range(len(input)):
        # Write the tensor to the input_fifo and queue an inference
        graph.LoadTensor(input[i], None)
        output, userobj = graph.GetResult()
        print(np.argmax(output), end=' ')
    stop = time.time()
    print('')
    print("Time : {0} ({1} images)".format(stop-start, len(input)))

    graph.DeallocateGraph()
    device.CloseDevice()
    return output

if __name__ == '__main__':
    print("Image path : {0}".format(os.path.join(IMAGE_DIR_NAME, '*.jpg or *.png')))
    jpg_list = glob.glob(os.path.join(IMAGE_DIR_NAME, '*.jpg'))
    jpg_list += glob.glob(os.path.join(IMAGE_DIR_NAME, '*.png'))
    if not len(jpg_list):
        print("No image file")
        sys.exit()
    jpg_list.reverse()
    print([i.split('/')[-1] for i in jpg_list][:10])
    img_list = []
    for n in jpg_list:
        image = cv2.imread(n)
        image = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
        image = cv2.resize(image, (28, 28))
        img_list.append(image)
    img_list = np.asarray(img_list) * (1.0/255.0)
    img_list = np.reshape(img_list, [-1, 784])
    print("imgshape ", img_list.shape)
    predict(img_list.astype(np.float16))

Source code 2

Input is given as [None, 28, 28, 3].

#!/usr/bin/env python
# coding: utf-8

# In[1]:

import os
os.environ['TF_CPP_MIN_LOG_LEVEL']='2'
os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
os.environ["CUDA_VISIBLE_DEVICES"] = "0"
import tensorflow as tf
import numpy as np
import tensorflow.contrib.slim as slim
from tensorflow.examples.tutorials.mnist import input_data
import loadimg

# In[2]:

NUMBER_OF_CLASS = 10

# In[3]:

def MnistNetworkTeacher(input,keep_prob_conv,keep_prob_hidden,scope='Mnist',reuse = False):
    with tf.variable_scope(scope,reuse = reuse) as sc :
        with slim.arg_scope([slim.conv2d],
                            kernel_size = [3,3],
                            stride = [1,1],
                            biases_initializer=tf.constant_initializer(0.0),
                            activation_fn=tf.nn.relu):
            net = slim.conv2d(input, 32, scope='conv1')
            net = slim.max_pool2d(net,[2, 2], 2, scope='pool1')
            net = tf.nn.dropout(net, keep_prob_conv)

            net = slim.conv2d(net, 64,scope='conv2')
            net = slim.max_pool2d(net,[2, 2], 2, scope='pool2')
            net = tf.nn.dropout(net, keep_prob_conv)

            net = slim.conv2d(net, 128,scope='conv3')
            net = slim.max_pool2d(net,[2, 2], 2, scope='pool3')
            net = tf.nn.dropout(net, keep_prob_conv)

            net = slim.flatten(net)
        with slim.arg_scope([slim.fully_connected],
                            biases_initializer=tf.constant_initializer(0.0),
                            activation_fn=tf.nn.relu) :
            net = slim.fully_connected(net,625,scope='fc1')
            net = tf.nn.dropout(net, keep_prob_hidden)
            net = slim.fully_connected(net,NUMBER_OF_CLASS,activation_fn=None,scope='fc2')
            net = tf.nn.softmax(net/temperature)
        return net

# In[4]:

def MnistNetworkStudent(input,scope='Mnist',reuse = False):
    with tf.variable_scope(scope,reuse = reuse) as sc :
        with slim.arg_scope([slim.fully_connected],
                            biases_initializer=tf.constant_initializer(0.0),
                            activation_fn=tf.nn.sigmoid):
            net = slim.fully_connected(input,1000,scope = 'fc1')
            net = slim.fully_connected(net, NUMBER_OF_CLASS, activation_fn = None, scope = 'fc2')
        return net

# In[5]:

def loss(prediction,output,temperature = 1):
    cross_entropy = tf.reduce_mean(-tf.reduce_sum(
        output * tf.log(tf.clip_by_value(prediction,1e-10,1.0)),
        reduction_indices=[1]))
    correct_prediction = tf.equal(tf.argmax(prediction,1), tf.argmax(output,1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    return cross_entropy,accuracy

# In[6]:

eps = 0.1
alpha = 0.5
temperature = 1
start_lr = 1e-4
decay = 1e-6

# In[7]:

with tf.Graph().as_default():
    x = tf.placeholder(tf.float32, shape=[None, 28,28,1], name='input')
    y_ = tf.placeholder(tf.float32, shape=[None, NUMBER_OF_CLASS])
    keep_prob_conv = tf.placeholder(tf.float32)
    keep_prob_hidden = tf.placeholder(tf.float32)
    x_line = tf.reshape(x, [-1,784])

    y_conv_teacher=MnistNetworkTeacher(x,keep_prob_conv, keep_prob_hidden,scope = 'teacher')
    y_conv = MnistNetworkStudent(x_line,scope = 'student')
    y_conv_student = tf.nn.softmax(y_conv/temperature)
    y_conv_student_actual = tf.nn.softmax(y_conv)

    cross_entropy_teacher, accuracy_teacher=loss(y_conv_teacher, y_, temperature = temperature)
    student_loss1, accuracy_student = loss(y_conv_student_actual, y_, temperature = temperature)
    student_loss2 = tf.reduce_mean( - tf.reduce_sum(y_conv_teacher * tf.log(tf.clip_by_value(y_conv_student, 1e-10,1.0)), reduction_indices=1) )
    cross_entropy_student = student_loss1 + student_loss2

    model_vars = tf.trainable_variables()
    var_teacher = [var for var in model_vars if 'teacher' in var.name]
    var_student = [var for var in model_vars if 'student' in var.name]

    grad_teacher = tf.gradients(cross_entropy_teacher,var_teacher)
    grad_student = tf.gradients(cross_entropy_student,var_student)

    l_rate = tf.placeholder(shape=[],dtype = tf.float32)
    trainer = tf.train.RMSPropOptimizer(learning_rate = l_rate)
    trainer1 = tf.train.GradientDescentOptimizer(0.1)
    train_step_teacher = trainer.apply_gradients(zip(grad_teacher,var_teacher))
    train_step_student = trainer1.apply_gradients(zip(grad_student,var_student))

    sess = tf.InteractiveSession()
    sess.run(tf.global_variables_initializer())
    saver1 = tf.train.Saver(var_teacher)
    saver2 = tf.train.Saver(var_student)

# In[8]:

#mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)
x_train, y_train, x_test, y_test, class_count = loadimg.loadimg(
    '/home/tokunn/make_image/', NUMBER_OF_CLASS
)

# In[9]:

for i in range(10000):
    #batch = mnist.train.next_batch(128)
    s = 128*i % len(x_train)
    batch = [x_train[s:s+128], y_train[s:s+128]]
    lr = start_lr * 1.0/(1.0 + i*decay)
    if i%100 ==0:
        train_accuracy = accuracy_teacher.eval(feed_dict={x:x_test, y_: y_test, keep_prob_conv: 1.0, keep_prob_hidden: 1.0})
        print("step %d, training accuracy %g,"%(i, train_accuracy))
    train_step_teacher.run(feed_dict={x: batch[0], y_: batch[1], keep_prob_conv :0.8,
                                      #keep_prob_hidden:0.5})
                                      keep_prob_hidden:0.5, l_rate:lr})
saver1.save(sess,'./models/teacher1.ckpt')

print('*'*20)

for i in range(30000):
    #batch = mnist.train.next_batch(100)
    s = 128*i % len(x_train)
    batch = [x_train[s:s+100], y_train[s:s+100]]
    if i%100 == 0:
        train_accuracy = accuracy_student.eval(feed_dict={x:x_test, y_: y_test, keep_prob_conv: 1.0, keep_prob_hidden: 1.0})
        print("step %d, training accuracy %g"%(i, train_accuracy))
    train_step_student.run(feed_dict={x: batch[0], y_: batch[1], keep_prob_conv :1.0, keep_prob_hidden:1.0})
saver2.save(sess,'./models/student.ckpt')

# In[10]:

test_acc = sess.run(accuracy_student,feed_dict={x: x_test, y_: y_test, keep_prob_conv: 1.0, keep_prob_hidden: 1.0})
print("test accuracy of the student model is %g "%(test_acc))

# In[11]:

fw = tf.summary.FileWriter('logs', sess.graph)
fw.close()
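The student objective in the script above is the sum of two cross-entropy terms: one against the true labels (student_loss1) and one against the teacher's softened output (student_loss2). The following is a minimal pure-Python sketch of that sum for a single example. It is not the TensorFlow code itself: the function names softmax, cross_entropy and student_loss are made up for illustration, and the script additionally averages over the batch with tf.reduce_mean.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax of logits / temperature, shifted by the max for stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(target, prediction, eps=1e-10):
    """-sum(target * log(clip(prediction, eps, 1.0))), mirroring loss()."""
    return -sum(t * math.log(min(max(p, eps), 1.0))
                for t, p in zip(target, prediction))

def student_loss(student_logits, labels, teacher_probs, temperature=1.0):
    """Hypothetical helper: hard-label term plus teacher-matching term."""
    # Corresponds to student_loss1: softmax without temperature vs. labels.
    hard = cross_entropy(labels, softmax(student_logits))
    # Corresponds to student_loss2: softmax with temperature vs. teacher output.
    soft = cross_entropy(teacher_probs, softmax(student_logits, temperature))
    return hard + soft

if __name__ == "__main__":
    # Illustrative values only, not taken from the training run.
    logits = [2.0, 0.5, -1.0]
    labels = [1.0, 0.0, 0.0]
    teacher = [0.7, 0.2, 0.1]
    print(student_loss(logits, labels, teacher, temperature=1.0))
```

With temperature = 1, as set in the script, both terms use the same student softmax, so the temperature only starts to matter once it is raised above 1.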

Running pretrained models from the TensorFlow Model Zoo on Movidius (Inception-V3 and MobileNet V1)

Method

The procedure is documented here: https://movidius.github.io/ncsdk/tf_modelzoo.html

Download the sources.

git clone https://github.com/tensorflow/tensorflow.git
git clone https://github.com/tensorflow/models.git

Download the pretrained checkpoint.

wget -nc http://download.tensorflow.org/models/inception_v3_2016_08_28.tar.gz
tar -xvf inception_v3_2016_08_28.tar.gz

Export the GraphDef file.

python3 ../models/research/slim/export_inference_graph.py \
    --alsologtostderr \
    --model_name=inception_v3 \
    --batch_size=1 \
    --dataset_name=imagenet \
    --image_size=299 \
    --output_file=inception_v3.pb

Freeze the graph.

python3 ../tensorflow/tensorflow/python/tools/freeze_graph.py \
    --input_graph=inception_v3.pb \
    --input_binary=true \
    --input_checkpoint=inception_v3.ckpt \
    --output_graph=inception_v3_frozen.pb \
    --output_node_name=InceptionV3/Predictions/Reshape_1

mvNCCompile -s 12 inception_v3_frozen.pb -in=input -on=InceptionV3/Predictions/Reshape_1

Trying it out

During the freeze step:

tokunn@tokunn-VirtualBox 16:13:16 [~/Documents/MovidiusTensorflow/use_modelzoo/inceptionV3] $ python3 ~/Documents/source/tensorflow/tensorflow/python/tools/freeze_graph.py \

> --input_graph=inception_v3.pb \

--input_binary=true \

--input_checkpoint=inception_v3.ckpt \

--output_graph=inception_v3_frozen.pb \

--output_node_name=InceptionV3/Predictions/Reshape_1

Traceback (most recent call last):

File "/home/tokunn/Documents/source/tensorflow/tensorflow/python/tools/freeze_graph.py", line 58, in <module>

from tensorflow.python.training import checkpoint_management

ImportError: cannot import name 'checkpoint_management'

It does not run.

This appears to be a known bug: https://github.com/tensorflow/tensorflow/issues/22019

Apply a patch, as usual.

58d57
< from tensorflow.python.training import checkpoint_management
59a59
> import tensorflow as tf
127c127
< not checkpoint_management.checkpoint_exists(input_checkpoint)):
---
> not tf.train.checkpoint_exists(input_checkpoint)):

The frozen graph was written successfully.

Checking it with mvNCCheck:

tokunn@nanase 7:41:25 [~] $ mvNCCheck -s 12 inception_v3_frozen.pb -in=input -on=InceptionV3/Predictions/Reshape_1 2>/dev/null
mvNCCheck v02.00, Copyright @ Movidius Ltd 2016

Result: (1, 1, 1001)
1) 22 0.1576
2) 93 0.1223
3) 95 0.0448
4) 23 0.03558
5) 24 0.02771
Expected: (1, 1001)
1) 22 0.1599765
2) 93 0.12189513
3) 95 0.04604088
4) 23 0.03503471
5) 24 0.02783106
------------------------------------------------------------
 Obtained values
------------------------------------------------------------
 Obtained Min Pixel Accuracy: 1.4900462701916695% (max allowed=2%), Pass
 Obtained Average Pixel Accuracy: 0.01522512175142765% (max allowed=1%), Pass
 Obtained Percentage of wrong values: 0.0% (max allowed=0%), Pass
 Obtained Pixel-wise L2 error: 0.06495287965302322% (max allowed=1%), Pass
 Obtained Global Sum Difference: 0.02438097447156906
------------------------------------------------------------

It ran without problems.
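As a quick sanity check on the mvNCCheck output above, the top-5 lists for Result (on the stick) and Expected (TensorFlow on the host) can be compared by hand. The sketch below does that with the class/score pairs copied from the log. The helpers ranking and max_abs_diff are hypothetical names for illustration, and this is not how mvNCCheck computes its own metrics.

```python
# Top-5 (class index -> score) pairs copied from the mvNCCheck log.
result = {22: 0.1576, 93: 0.1223, 95: 0.0448, 23: 0.03558, 24: 0.02771}
expected = {22: 0.1599765, 93: 0.12189513, 95: 0.04604088,
            23: 0.03503471, 24: 0.02783106}

def ranking(scores):
    """Class indices sorted by score, highest first."""
    return sorted(scores, key=lambda c: scores[c], reverse=True)

def max_abs_diff(a, b):
    """Largest absolute score difference over the classes present in both."""
    common = set(a) & set(b)
    return max(abs(a[c] - b[c]) for c in common)

if __name__ == "__main__":
    print("same top-5 order:", ranking(result) == ranking(expected))
    print("max abs diff    :", max_abs_diff(result, expected))
```

If the order matches and the differences stay small, the float16 inference on the device agrees with the host result for the classes that matter.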

Trying another network (MobileNet V1)

List of networks: https://github.com/tensorflow/models/tree/master/research/slim/nets

List of weights: https://github.com/tensorflow/models/tree/master/research/slim

Preparing the model

The network name is mobilenet_v1.

The weights are at http://download.tensorflow.org/models/mobilenet_v1_2018_02_22/mobilenet_v1_0.5_160.tgz

The downloaded weights archive turned out to also contain mobilenet_v1_0.5_160_frozen.pb.

All that remains is to compile it.

Finding the input/output nodes

Look up the node names in the .pb file.

The code is attached.
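The attached script is not reproduced here, so the following is only a guess at what a script like getnodename_pb.py could look like. It assumes TensorFlow 1.x (tf.GraphDef) and a binary frozen GraphDef, and it prints each node as "name , op" to match the output format shown in the result below. The helper names load_nodes and format_nodes are made up for this sketch.

```python
import sys

def format_nodes(nodes):
    """Format (name, op) pairs as 'name , op' lines."""
    return ["{0} , {1}".format(name, op) for name, op in nodes]

def load_nodes(pb_path):
    """Read a binary GraphDef file and return (name, op) for every node."""
    # Imported lazily so the formatting helper works without TensorFlow.
    # Assumption: TensorFlow 1.x, where tf.GraphDef is available.
    import tensorflow as tf
    graph_def = tf.GraphDef()
    with open(pb_path, "rb") as f:
        graph_def.ParseFromString(f.read())
    return [(node.name, node.op) for node in graph_def.node]

# Runs only when exactly one .pb path is passed on the command line.
if __name__ == "__main__" and len(sys.argv) == 2 and sys.argv[1].endswith(".pb"):
    for line in format_nodes(load_nodes(sys.argv[1])):
        print(line)
```

Piping the output through grep, as in the result below, narrows the list down to candidate input (Placeholder) and output nodes.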

Result

tokunn@tokunn-VirtualBox 17:02:17 [~/Documents/MovidiusTensorflow/use_modelzoo/mobilenet_v1/getnodename_pb.py] $ python3 getnodename_pb.py | grep input
input , Placeholder
tokunn@tokunn-VirtualBox 17:03:10 [~/Documents/MovidiusTensorflow/use_modelzoo/mobilenet_v1/getnodename_pb.py] $ python3 getnodename_pb.py | grep Predictions
MobilenetV1/Predictions/Reshape_1 , Reshape

The nodes are most likely this input and MobilenetV1/Predictions/Reshape_1.

Compiling

Everything has gone smoothly so far, so on to compiling.

mvNCCompile -s 12 mobilenet_v1_0.5_160_frozen.pb -in=input -on=MobilenetV1/Predictions/Reshape_1

As expected, the never-ending fight with errors continues.

tokunn@tokunn-VirtualBox 17:10:23 [~/Documents/MovidiusTensorflow/use_modelzoo/mobilenet_v1] $ mvNCCompile -s 12 mobilenet_v1_0.5_160_frozen.pb -in=input -on=MobilenetV1/Predictions/Reshape_1

mvNCCompile v02.00, Copyright @ Movidius Ltd 2016

/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/framework/ops.py:923: DeprecationWarning: builtin type EagerTensor has no __module__ attribute

EagerTensor = c_api.TFE_Py_InitEagerTensor(_EagerTensorBase)

/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/util/tf_inspect.py:75: DeprecationWarning: inspect.getargspec() is deprecated, use inspect.signature() instead

return _inspect.getargspec(target)

1

Traceback (most recent call last):

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 1278, in _do_call

return fn(*args)

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 1263, in _run_fn

options, feed_dict, fetch_list, target_list, run_metadata)

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 1350, in _call_tf_sessionrun

run_metadata)

tensorflow.python.framework.errors_impl.InvalidArgumentError: You must feed a value for placeholder tensor 'input' with dtype float and shape [?,160,160,3]

[[Node: input = Placeholder[dtype=DT_FLOAT, shape=[?,160,160,3], _device="/job:localhost/replica:0/task:0/device:CPU:0"]()]]

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/usr/local/bin/mvNCCompile", line 118, in <module>

create_graph(args.network, args.inputnode, args.outputnode, args.outfile, args.nshaves, args.inputsize, args.weights)

File "/usr/local/bin/mvNCCompile", line 104, in create_graph

net = parse_tensor(args, myriad_config)

File "/usr/local/bin/ncsdk/Controllers/TensorFlowParser.py", line 1061, in parse_tensor

desired_shape = node.inputs[1].eval()

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/framework/ops.py", line 680, in eval

return _eval_using_default_session(self, feed_dict, self.graph, session)

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/framework/ops.py", line 4951, in _eval_using_default_session

return session.run(tensors, feed_dict)

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 877, in run

run_metadata_ptr)

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 1100, in _run

feed_dict_tensor, options, run_metadata)

File "/home/tokunn/.local/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 1272, in _do_run

run_metadata)